RPA vs LLM Chains: When Legacy UI Bots Still Beat “Intelligent” Agents

April 8, 2026

Automation headlines in 2026 love large language models: agents that read screens, tools that write their own glue code, chains that call APIs with natural language plans. Meanwhile, plenty of enterprises still run robotic process automation (RPA) bots that click through the same green-screen flows they clicked through five years ago—brittle, unglamorous, and weirdly reliable when the world refuses to offer a clean API.

The honest story is not “LLMs replaced RPA.” It is “different tools for different failure modes.” This article compares the two approaches without mythologizing either, then offers a practical decision lens for teams tired of being sold magic.

We will cover determinism versus flexibility, cost realities, compliance, hybrid designs, and the organizational traps that sink automation programs before the tech even matters.

If you are an engineering leader, you may be asked to “just add AI” to a brittle workflow. If you are an operations lead, you may be asked to “rip out RPA” without funding APIs. Neither mandate is a strategy. This piece equips you to respond with trade-offs, not slogans.

What RPA still is, warts and all

RPA tools automate UIs the way a careful human would: mouse moves, keystrokes, field validation, sometimes OCR. They shine when a vendor offers no stable integration, when the workflow is rule-heavy, and when deviations are rare. They suffer when the UI changes color, when latency spikes, or when pop-ups appear in non-deterministic order.

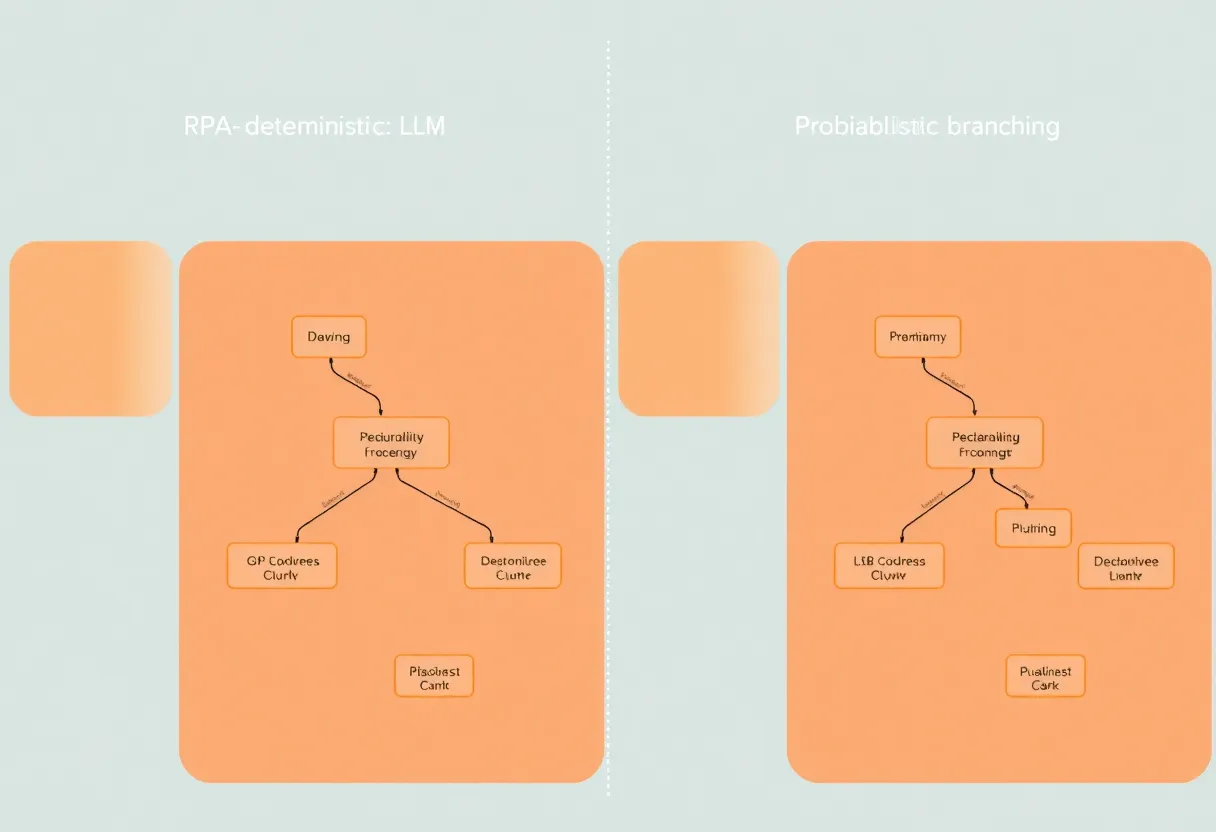

RPA’s superpower is determinism within a narrow lane. Given the same starting state, the same script should do the same thing. Auditors like that story. Operations teams like knowing exactly which step failed.

That determinism breaks when reality gets messy: daylight-saving time shifts, locale-specific number formats, or a banner announcing “new look, same great tools.” RPA teams respond with defensive waits, image recognition fallbacks, and ever-growing if-statements. It is not elegant; it is how enterprises move money when APIs refuse to exist.
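RPA platforms ship their own wait and retry activities. The plain-Python sketch below only illustrates the pattern of a defensive wait that fails loudly and says which step failed. `probe` is a hypothetical callable that returns the located element, or None while the screen is not ready. `sleep` is injectable so the logic can be tested without real delays.

```python
import logging
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")
log = logging.getLogger("rpa")


class StepFailed(Exception):
    """Raised when a step never reaches the expected UI state."""


def wait_for(
    step: str,
    probe: Callable[[], Optional[T]],
    attempts: int = 5,
    delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Poll `probe` until it returns something other than None.

    Every attempt is logged with the step name. Running out of attempts
    raises StepFailed instead of letting the script click on a stale screen.
    """
    for attempt in range(1, attempts + 1):
        result = probe()
        if result is not None:
            log.info("step=%s attempt=%d status=ok", step, attempt)
            return result
        log.warning("step=%s attempt=%d status=not_ready", step, attempt)
        if attempt < attempts:
            sleep(delay_s)  # bounded, fixed delay between polls
    raise StepFailed(f"{step}: not ready after {attempts} attempts")
```

The bounded attempt count matters as much as the wait. An unbounded loop turns a loud failure into a silent hang.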

What LLM chains add—and subtract

LLM-driven automation promises flexibility: handle varied wording, improvise around surprise dialogs, translate messy instructions into actions. That flexibility is also risk. Models can hallucinate plans, misread subtle UI states, or confidently take the wrong branch. Guardrails help—schemas, tool definitions, human approvals—but they add engineering work RPA rarely needed at small scale.
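One common guardrail is to refuse to act on model output until it parses and matches a narrow contract. The sketch below uses only the standard library. The action and field names are hypothetical. A real system might use JSON Schema or its model provider's structured-output features instead.

```python
import json

# Hypothetical contract: the only actions this step may ever take.
ALLOWED_ACTIONS = {"approve", "reject", "escalate"}
ALLOWED_FIELDS = {"action", "ticket_id", "reason"}


def parse_plan(raw: str) -> dict:
    """Parse and validate a model's proposed action; raise ValueError otherwise."""
    try:
        plan = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not JSON: {exc}") from exc
    if not isinstance(plan, dict):
        raise ValueError("plan must be a JSON object")
    if plan.get("action") not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {plan.get('action')!r}")
    if not isinstance(plan.get("ticket_id"), str) or not plan["ticket_id"]:
        raise ValueError("ticket_id must be a non-empty string")
    extra = set(plan) - ALLOWED_FIELDS
    if extra:
        # Reject rather than ignore: unexpected fields often signal a confused plan.
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    return plan
```

Anything that raises here goes to a retry or a human queue rather than to the executor.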

Cost profiles differ too. RPA runtime is often predictable CPU on a VM. LLM chains meter tokens and may call multiple models across steps. A process run a million times a month turns those differences into finance problems, not lab experiments.
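The volume argument is easier to see as arithmetic. The prices, token counts, and VM figures below are hypothetical placeholders, not quotes from any vendor. The RPA side counts only VM runtime, not licenses or staff.

```python
def llm_monthly_cost(
    runs: int,
    calls_per_run: int,
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Token-metered cost: every run pays for every model call it makes."""
    per_call = (input_tokens * usd_per_million_input
                + output_tokens * usd_per_million_output) / 1_000_000
    return runs * calls_per_run * per_call


def rpa_monthly_cost(vm_count: int, usd_per_vm_month: float) -> float:
    """Capacity-priced cost: roughly flat until another VM is needed."""
    return vm_count * usd_per_vm_month


# Hypothetical inputs: 1M runs, 3 model calls per run, 2,000 in / 300 out tokens.
llm = llm_monthly_cost(1_000_000, 3, 2_000, 300, 0.50, 1.50)
rpa = rpa_monthly_cost(4, 300.0)
print(f"LLM chain: ${llm:,.0f}/month, RPA runtime: ${rpa:,.0f}/month")
```

With these placeholder numbers the chain comes to about $4,350 a month against $1,200 for the VMs. The shape matters more than the figures: the LLM line scales linearly with runs and calls per run, while the VM line stays flat until capacity runs out.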

Reliability profiles differ as well. RPA fails loudly when selectors miss. LLMs can fail softly—producing plausible wrong answers—unless you build verification layers. That difference should shape testing: fuzz inputs for models, snapshot UIs for bots.
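A verification layer turns soft failures back into loud ones. The sketch below assumes a hypothetical invoice-extraction step whose output has `invoice_no`, `total`, and `line_amounts` fields. It checks two things: that the identifier appears verbatim in the source, and that the amounts are internally consistent. Passing these checks does not prove the extraction is right, only that it is not obviously wrong.

```python
from decimal import Decimal, InvalidOperation
from typing import List


def verify_extraction(source_text: str, extracted: dict) -> List[str]:
    """Return a list of problems; an empty list means these checks passed."""
    problems: List[str] = []
    invoice_no = str(extracted.get("invoice_no", ""))
    if not invoice_no or invoice_no not in source_text:
        problems.append("invoice_no not found verbatim in source")
    try:
        total = Decimal(str(extracted.get("total", "")))
        lines = [Decimal(str(x)) for x in extracted.get("line_amounts", [])]
    except InvalidOperation:
        return problems + ["amounts are not valid decimals"]
    # Decimal avoids float rounding surprises when comparing currency amounts.
    if sum(lines, Decimal("0")) != total:
        problems.append("line amounts do not sum to total")
    return problems
```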

When legacy bots still win

Regulated workflows with audit trails. If you must prove who did what and when, deterministic scripts with explicit logs can be easier to defend than probabilistic reasoning—even if the logs are boring.

High-volume, low-variance tasks. Copying fields between systems that rarely change beats paying for inference on every row.

Air-gapped or data-sensitive environments. Sending screen contents to external models may be a non-starter. Local models exist, but their operational overhead is real.

Teams without ML muscle. RPA is unglamorous but teachable. LLM systems need eval harnesses, prompt versioning, and failure budgets.

When LLM chains earn their keep

Semantic tasks. Classifying tickets, summarizing exceptions, extracting intent from emails—these are natural language problems where rigid selectors rot quickly.

Rapidly changing UIs. If your SaaS vendor ships weekly layout tweaks, maintaining brittle selectors is torture; vision-plus-tool use might survive longer—at a price.

Orchestration glue. Mapping messy human instructions into API calls can be faster to iterate with models than with endless new RPA branches—if you validate outputs.

Small models versus frontier models

Not every LLM workflow needs the largest flagship. Classification, extraction, and routing often work on smaller, cheaper models with tighter latency—especially when you can constrain outputs with JSON schema or function calling. The winning pattern is selective intelligence: use big models where ambiguity is high, small models where labels are crisp.
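Selective intelligence can be as simple as a cascade. Ask the small model first, and escalate only when its answer falls outside the label set or below a confidence threshold. `small` and `large` are hypothetical callables wrapping whatever models you use. The label set and the 0.85 threshold are placeholders. Check model-reported confidence against your eval set before you trust it.

```python
from typing import Callable, Tuple

# Hypothetical wrapper signature: text in, (label, confidence in [0, 1]) out.
Classifier = Callable[[str], Tuple[str, float]]

LABELS = {"billing", "shipping", "refund", "other"}  # hypothetical crisp label set


def route(text: str, small: Classifier, large: Classifier,
          threshold: float = 0.85) -> Tuple[str, str]:
    """Return (label, which_model). The cheap model handles crisp cases."""
    label, confidence = small(text)
    if label in LABELS and confidence >= threshold:
        return label, "small"
    # Ambiguous or off-schema: pay for the bigger model.
    label, _ = large(text)
    return (label if label in LABELS else "other"), "large"
```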

Human-in-the-loop as a feature, not a failure

Some processes should never fully automate. Approvals, refunds above thresholds, and anything touching legal wording benefit from checkpoints. LLMs can draft; humans can sign. RPA can push data; humans can confirm. Designing escalation as part of the UX beats pretending confidence where none exists.
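Escalation works best as an explicit policy check that runs before any action executes. In this sketch the $200 refund limit, the action kinds, and the `touches_legal_text` flag are hypothetical stand-ins for your own policy.

```python
from dataclasses import dataclass
from decimal import Decimal

REFUND_AUTO_LIMIT = Decimal("200.00")  # hypothetical policy threshold


@dataclass
class ProposedAction:
    kind: str                        # e.g. "refund", "update_address", "send_letter"
    amount: Decimal = Decimal("0")
    touches_legal_text: bool = False


def needs_human(action: ProposedAction) -> bool:
    """True when policy requires a person to sign off before execution."""
    if action.touches_legal_text:
        return True  # models may draft legal wording; humans sign it
    if action.kind == "refund" and action.amount > REFUND_AUTO_LIMIT:
        return True
    return False
```

Keeping the rule in code rather than in a prompt means the threshold cannot be argued away by model output.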

Hybrid patterns that appear in the wild

Practical systems often combine layers: RPA for stable mechanical steps, LLMs for interpretation steps, humans for exceptions. The architecture works when boundaries are explicit—where the deterministic script ends and where the model begins—and when you log handoffs faithfully.
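One way to keep those boundaries explicit is to tag every stage with its kind and log each handoff. The stage names below are hypothetical, and the "log" is a list of JSON lines standing in for whatever log sink you actually use.

```python
import json
import time
from typing import Callable, List, Tuple

# (stage name, kind, function); kind is "rpa", "llm", or "human".
Stage = Tuple[str, str, Callable[[dict], dict]]


def run_pipeline(record: dict, stages: List[Stage], handoff_log: List[str]) -> dict:
    """Run stages in order and append one JSON line per handoff.

    Logging the stage kind and the fields it changed keeps the line between
    deterministic steps and model steps visible after the fact.
    """
    for name, kind, fn in stages:
        before = dict(record)
        record = fn(record)
        changed = sorted(k for k in record if before.get(k) != record[k])
        handoff_log.append(json.dumps(
            {"ts": time.time(), "stage": name, "kind": kind, "changed": changed}
        ))
    return record
```

When a field turns out wrong, the log shows which kind of stage last wrote it.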

Anti-patterns include “LLM everywhere because it is new” and “RPA forever because we already paid licenses.” Both waste money.

API-first escape hatches

The best automation is neither RPA nor LLM—it is a vendor finally shipping an API. Push partners for integrations; use bots as temporary scaffolding. Document which flows are “until API exists” so technical debt does not become folklore.

Observability for bots and chains

RPA platforms often include step-level screenshots and replay; LLM pipelines need tracing for tool calls, token usage, and model versions. Treat both like production systems with SLOs, not side projects. If nobody owns the on-call rotation, you do not have automation; you have a pet script that bites people quarterly.
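A minimal per-call trace record might capture the tool, the model version, token counts, duration, and any error. This is a hand-rolled sketch for illustration. In production you would more likely use an existing tracing library such as OpenTelemetry. The tool and model-version strings are hypothetical.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import asdict, dataclass, field
from typing import Iterator, List


@dataclass
class LLMSpan:
    tool: str
    model_version: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    input_tokens: int = 0
    output_tokens: int = 0
    duration_s: float = 0.0
    error: str = ""


@contextmanager
def traced_call(tool: str, model_version: str, sink: List[dict]) -> Iterator[LLMSpan]:
    """Record one tool call; the caller fills in token counts from the response."""
    span = LLMSpan(tool=tool, model_version=model_version)
    start = time.perf_counter()
    try:
        yield span
    except Exception as exc:
        span.error = repr(exc)  # keep the failure on the span, then re-raise
        raise
    finally:
        span.duration_s = time.perf_counter() - start
        sink.append(asdict(span))  # a real system would export to a tracing backend
```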

Risk registers worth writing down

For RPA: selector drift, session timeouts, concurrency clashes when two bots fight for the same window. For LLMs: prompt injection via untrusted content, inconsistent JSON, evaluation gaps between staging and prod.

Security teams care about both. RPA accounts with powerful credentials are juicy targets; LLM tools that can exfiltrate data via creative prompts are equally uncomfortable.
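One partial mitigation for both concerns is to let your code, not the model, decide which tools a stage may call. The sketch below enforces a per-stage allowlist at dispatch time. Text injected into a document cannot unlock a tool that was never granted. It can still misuse the tools that were granted, so this limits blast radius rather than preventing injection. The tool names are hypothetical.

```python
from typing import Any, Callable, Dict, FrozenSet


def dispatch(
    tool_name: str,
    args: Dict[str, Any],
    registry: Dict[str, Callable[..., Any]],
    allowed: FrozenSet[str],
) -> Any:
    """Run a model-requested tool only if the current stage allows it."""
    if tool_name not in allowed:
        raise PermissionError(f"tool {tool_name!r} is not allowed in this stage")
    return registry[tool_name](**args)
```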

Data residency and logging

Screen captures for RPA debugging can accidentally store PII. LLM traces can include user text you should not retain. Define retention policies before you enable “helpful” logging defaults. Privacy teams are happier when you ask early.
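Redacting before anything reaches a log or trace is cheaper than purging later. The two patterns below are illustrative assumptions: they catch email addresses and long digit runs such as account numbers, and they will miss names, addresses, and anything else your data contains. Treat this as a first filter, not a privacy guarantee.

```python
import re

_EMAIL = re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+")
_LONG_DIGITS = re.compile(r"\b\d{9,19}\b")  # account- or card-like numbers


def redact(text: str) -> str:
    """Mask obvious PII patterns before text is written to logs or traces."""
    text = _EMAIL.sub("[EMAIL]", text)
    return _LONG_DIGITS.sub("[NUMBER]", text)
```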

How to decide without a committee deck

Ask: Is the task primarily mechanical or primarily semantic? Mechanical favors RPA or direct APIs. Semantic favors models—possibly small, specialized ones rather than frontier giants.

Ask: What is the cost of a wrong action? High-stakes finance movements may need human confirmation regardless of tool.

Ask: Do we have ground-truth tests? If you cannot evaluate correctness, an LLM chain is a slot machine wearing a lab coat.
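The three questions can be written as a toy function. It is not a substitute for judgment, but it makes the ordering explicit: without ground truth, a semantic task is not ready for an LLM chain at all. The return strings are illustrative.

```python
def recommend(semantic: bool, api_available: bool, high_stakes: bool,
              has_ground_truth: bool) -> str:
    """Toy encoding of the mechanical/semantic, stakes, and ground-truth questions."""
    if semantic and not has_ground_truth:
        # No way to check answers means no way to evaluate a model.
        return "build ground-truth tests first"
    if semantic:
        choice = "LLM chain (try a small model first)"
    else:
        choice = "direct API" if api_available else "RPA"
    if high_stakes:
        choice += " + human confirmation"
    return choice
```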

Total cost math beyond licenses

RPA costs include orchestration servers, Windows images, and people who understand the legacy apps. LLM chains add eval teams, red-teaming, and model upgrades. Compare five-year totals, not launch-week demos, and include failure remediation: the support hours spent cleaning up after a bot confidently clicks “delete.”
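Over five years the recurring lines usually outweigh the build. This minimal sketch keeps the categories from the paragraph above separate so none of them silently drops out. The inputs are your own estimates; no figures are implied here.

```python
from dataclasses import dataclass


@dataclass
class FiveYearCost:
    build: float                 # one-time: development, process mapping, setup
    run_per_year: float          # licenses, VMs, or inference spend
    people_per_year: float       # maintenance, evals, on-call, upgrades
    remediation_per_year: float  # support hours spent undoing wrong actions

    def total(self, years: int = 5) -> float:
        recurring = self.run_per_year + self.people_per_year + self.remediation_per_year
        return self.build + years * recurring
```

Build one instance per option, for example an RPA build and an LLM-chain build, and compare `total()` rather than `build`.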

Change management is the hidden variable

Automation changes job roles. RPA sometimes feels like headcount replacement; LLM pilots sometimes feel like chaos. Invest in training and clear escalation paths. Tools do not fail only technically—they fail politically.

Maintenance: where both approaches hurt

RPA maintenance is death by a thousand UI tweaks. LLM maintenance is death by a thousand prompt regressions when models update. Budget quarterly reviews for either stack. If you cannot name an owner, you do not have a roadmap—you have a museum exhibit.

Citizen developer programs

Low-code RPA succeeded partly by empowering business analysts. LLM tooling tempts the same impulse with faster demos. Guardrails matter: code review, secrets management, and sandbox environments. Excitement is not a substitute for access control.

Vendor narratives

RPA vendors now bolt on “AI.” Model vendors now offer “agents.” Read integration specs, not keynote slides. Ask what happens offline, what SLAs cover, and how exports work if you leave. Portability still matters when hype cycles turn.

Closing take

RPA bots are not dinosaurs; they are narrow instruments. LLM chains are not universal solvents; they are flexible—and sometimes sloppy. Choose based on variance, volume, compliance, and the quality bar you can enforce. The best automation strategy in 2026 is often boring machinery plus careful intelligence where language actually matters—not intelligence smeared evenly on every button.

Metrics that keep teams honest

Track automation coverage alongside exception rates. If your LLM pipeline “works” but humans fix twenty percent of rows, you have a fancy typing tutor, not savings. Likewise, if RPA uptime looks great but business users rekey data secretly in spreadsheets, your bot is theater.
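Coverage alone flatters a pipeline. The sketch below reports it alongside the exception rate and the share of rows that went through with no human fix. The metric names are this sketch's own choices, and the counts would come from your own logs.

```python
def automation_metrics(total_rows: int, automated_rows: int,
                       human_fixed_rows: int) -> dict:
    """Coverage counts rows the automation touched; straight_through counts rows nobody fixed."""
    if total_rows <= 0 or not 0 <= human_fixed_rows <= automated_rows <= total_rows:
        raise ValueError("inconsistent counts")
    return {
        "coverage": automated_rows / total_rows,
        "exception_rate": human_fixed_rows / automated_rows if automated_rows else 0.0,
        "straight_through": (automated_rows - human_fixed_rows) / total_rows,
    }
```

In the article's example, a pipeline that touches every row while humans fix twenty percent reports full coverage but only 80 percent straight-through.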

Measure time-to-recover when upstream systems change. The team with faster recovery wins—even if their initial build was slower. Velocity without resilience is debt.
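Time-to-recover can be tracked from two timestamps per incident: when the upstream change broke the automation, and when it was healthy again. This sketch uses the median so that one unusual outage does not dominate. The incident records are hypothetical, and the function raises `statistics.StatisticsError` on an empty list.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import List, Tuple


def median_time_to_recover(incidents: List[Tuple[datetime, datetime]]) -> timedelta:
    """incidents: (broke_at, recovered_at) pairs for upstream-change incidents."""
    durations = [(recovered - broke).total_seconds() for broke, recovered in incidents]
    return timedelta(seconds=median(durations))
```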

Finally, compare customer-visible outcomes. Did invoices go out faster? Did tickets close sooner? Did compliance filings stop missing deadlines? Automation exists to move real needles, not just dashboards.