Monorepos promise one repository, one toolchain, and shared standards across teams. The pitch is coherent: refactor across packages without publishing intermediate versions to a registry, keep versions aligned, and let developers navigate a single graph of code. Then CI runs, and the bill arrives. Suddenly the conversation is not about elegant architecture; it is about cache misses, fan-out jobs, and whether your build system is saving time or quietly taxing every pull request.

In 2026, the question is not “Should we use a monorepo?” Plenty of organizations already do. The better question is: when does the tooling stop being a multiplier and start being a drag—and how do you tell the difference before morale and budgets burn?

This piece is written for tech leads and platform engineers who already feel the tension: developers want speed, finance wants predictable spend, and security wants assurance. The answers live in specifics—graphs, caches, and ownership—not in slogans about “developer happiness.”

What monorepos optimize for

At their best, monorepos reduce coordination tax. When interfaces change, you can update callers in the same change set. When security patches land, you can upgrade dependencies across packages with a single policy. When onboarding a new engineer, one clone gets them the whole product surface, not a scavenger hunt across dozens of repositories with mismatched conventions.

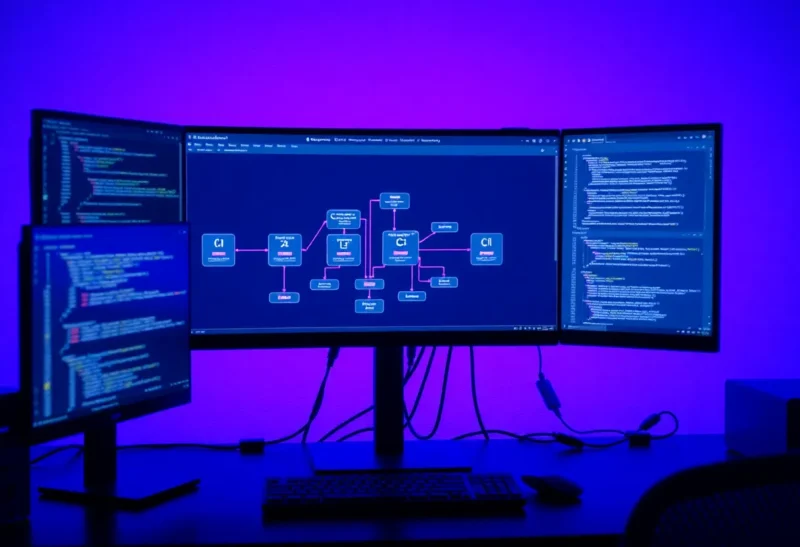

Tooling like Nx, Turborepo, Bazel-style approaches, or bespoke scripts exists to make that promise hold at scale: incremental builds, affected-project detection, remote caching, and shared task graphs. The theory is sound. The practice depends on discipline and measurement.

Where CI minutes quietly explode

The first failure mode is “run everything, always.” Without affected-project logic, every commit triggers the same wall of tests as if the entire company touched the same line. That might be acceptable in a tiny repo; it does not stay acceptable when packages multiply.
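To make affected-project logic concrete, here is a minimal sketch in Python, assuming you can export your dependency graph as a plain mapping. The package names and the `affected_packages` helper are hypothetical; real task runners ship their own version of this against their own graph formats.

```python
from collections import defaultdict, deque

def affected_packages(deps, changed):
    """Return the changed packages plus everything that transitively depends on them.

    deps maps each package to the packages it depends on (illustrative names).
    """
    # Invert the edges: for each package, record who depends on it.
    dependents = defaultdict(set)
    for pkg, requires in deps.items():
        for dep in requires:
            dependents[dep].add(pkg)

    # Breadth-first walk outward from the changed packages.
    affected = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for parent in dependents[current]:
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected

DEPS = {
    "web-app": ["ui-kit", "api-client"],
    "admin-app": ["ui-kit"],
    "api-client": ["schemas"],
    "billing-worker": ["schemas"],
    "ui-kit": [],
    "schemas": [],
}

print(sorted(affected_packages(DEPS, ["ui-kit"])))
# ['admin-app', 'ui-kit', 'web-app']
```

A change to `ui-kit` leaves `billing-worker` and `api-client` untouched, which is exactly the work a "run everything" pipeline would have paid for anyway.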

The second failure mode is poor cache keys. Remote caching only helps when inputs are modeled correctly. If your cache key accidentally includes a timestamp, a nondeterministic generator, or an overly broad file glob, you rebuild the world and wonder why the fancy cache never hits.
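A rough illustration of why key hygiene matters, assuming a cache key is a hash over the task name, the input files, and a chosen set of environment variables. The `cache_key` function and the `BUILD_TIME` variable are invented for this sketch and do not describe how any particular tool builds its keys.

```python
import hashlib

def cache_key(task, input_files, env):
    """Hash only the inputs that should change the output.

    input_files maps relative path -> file bytes; env maps name -> value.
    """
    digest = hashlib.sha256()
    digest.update(task.encode())
    # Sort paths and variable names so dict ordering never changes the key.
    for path in sorted(input_files):
        digest.update(b"\0" + path.encode() + b"\0")
        digest.update(hashlib.sha256(input_files[path]).digest())
    for name in sorted(env):
        digest.update(b"\0" + name.encode() + b"=" + env[name].encode())
    return digest.hexdigest()

files = {"src/index.ts": b"export const x = 1;\n", "package.json": b"{}\n"}
reordered = dict(reversed(list(files.items())))

stable = cache_key("build", files, {"NODE_ENV": "production"})
again = cache_key("build", reordered, {"NODE_ENV": "production"})

# Same sources, but a timestamp has leaked into the hashed environment.
polluted_a = cache_key("build", files, {"NODE_ENV": "production", "BUILD_TIME": "2026-01-01T10:00:00Z"})
polluted_b = cache_key("build", files, {"NODE_ENV": "production", "BUILD_TIME": "2026-01-01T10:00:01Z"})

print(stable == again)           # True: identical inputs, identical key
print(polluted_a == polluted_b)  # False: the timestamp forces a miss every run
```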

The third failure mode is dependency graph denial. Monorepos do not remove coupling; they make coupling visible. If every package depends on a shared kitchen-sink library, then every change ripples outward and your incremental story weakens.
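One way to put a number on that ripple is a "blast radius" per package: the share of other packages that transitively depend on it. The sketch below assumes the graph is available as a simple mapping; the package names and both helper functions are hypothetical.

```python
def transitive_deps(pkg, deps, seen=None):
    """Collect every package that pkg depends on, directly or indirectly."""
    seen = set() if seen is None else seen
    for dep in deps.get(pkg, ()):
        if dep not in seen:
            seen.add(dep)
            transitive_deps(dep, deps, seen)
    return seen

def blast_radius(deps):
    """For each package, the fraction of other packages that depend on it."""
    counts = {pkg: 0 for pkg in deps}
    for pkg in deps:
        for dep in transitive_deps(pkg, deps):
            counts[dep] = counts.get(dep, 0) + 1
    others = max(len(deps) - 1, 1)
    return {pkg: count / others for pkg, count in counts.items()}

DEPS = {
    "common": [],
    "auth": ["common"],
    "search": ["common"],
    "web": ["auth", "search"],
    "docs": [],
}

for pkg, share in sorted(blast_radius(DEPS).items(), key=lambda item: -item[1]):
    print(f"{pkg:<8} {share:.0%}")
```

In this toy graph a change to `common` invalidates three of the four other packages. A package whose radius creeps toward 100% is a reasonable candidate for splitting.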

Developer experience vs platform economics

Fast local builds feel like a win, and they are, but CI is scoped differently. A developer might compile only what they touched; CI might still lint, typecheck, and test a broader surface to protect main. The cost model shifts from “my laptop fan” to “metered minutes and queue time.”

That is not an argument against CI; it is an argument for aligning scope. The goal is not the shortest possible job; it is the shortest job that still catches the failures your team actually sees. Blindly parallelizing fifty tasks multiplies per-job setup (checkout, dependency install, cache restore) and can obscure the real bottleneck.
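A back-of-the-envelope model shows the trade-off. It assumes the work divides evenly across shards and that every job pays a fixed setup cost; the 60 minutes of work and 2 minutes of overhead are made-up numbers, and real pipelines will be lumpier.

```python
def shard_costs(total_work_min, per_job_overhead_min, shards):
    """Return (wall-clock minutes, billed minutes) for an evenly split job."""
    wall = per_job_overhead_min + total_work_min / shards
    billed = shards * wall
    return wall, billed

for shards in (1, 5, 10, 50):
    wall, billed = shard_costs(total_work_min=60, per_job_overhead_min=2, shards=shards)
    print(f"{shards:>2} shards: wall {wall:5.1f} min, billed {billed:6.1f} min")
```

Under these assumptions, going from 10 to 50 shards cuts wall-clock time from about 8 minutes to about 3, while billed minutes roughly double from 80 to 160. Whether that is worth it depends on what the extra five minutes of waiting cost you.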

Signals that your monorepo tooling is working

Healthy setups tend to share a few observable traits. Pull requests that touch isolated packages finish quickly because the graph prunes work. Mainline builds reuse remote cache artifacts across developers. Failures are specific: you get a nameable unit test, not a twenty-minute mystery log.

Another signal is predictable flake handling. Monorepos concentrate complexity; flaky tests become everybody’s problem. Teams that invest in quarantine policies, retry strategies that are not lies, and root-cause fixes keep CI trustworthy. If developers stop believing green checks, they stop trusting the system—and then they rerun jobs “just in case,” which burns minutes faster than any inefficient script.
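A simple starting point for a quarantine list, assuming your CI exports per-test results with the commit they ran against: a test that both passed and failed on the same commit is a flake candidate. The record shape and the `find_flaky` helper are assumptions for illustration.

```python
from collections import defaultdict

def find_flaky(runs):
    """Return tests that both passed and failed on the same commit.

    runs is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test, sha, passed in runs:
        outcomes[(test, sha)].add(passed)
    return sorted({test for (test, _sha), seen in outcomes.items() if seen == {True, False}})

RUNS = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),   # same commit, different outcome
    ("test_cart", "abc123", False),
    ("test_cart", "def456", True),     # fixed by a later commit, not flaky
    ("test_search", "def456", True),
]

print(find_flaky(RUNS))
# ['test_login']
```

Note that `test_cart` is not flagged: it failed on one commit and passed on another, which is what a real regression and fix look like.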

Signals that you are paying for ceremony

Watch for reruns becoming habitual. Watch for “merge queue took an hour” becoming a shrug. Watch for platform teams adding more checks to compensate for unclear ownership, until every change feels like a compliance ritual.

Also watch for tool sprawl: multiple task runners, overlapping lint rules, and custom scripts that only one person understands. Monorepos consolidate code, but they do not automatically consolidate wisdom. Documentation and templates matter as much as the graph algorithm.

Task runners and the “one graph to rule them all” trap

Popular task runners exist because npm scripts alone do not scale to hundreds of packages. They add orchestration, caching semantics, and sometimes remote execution. The trap is assuming the tool fixes architecture. A beautiful DAG still hurts if the underlying packages are tightly coupled or if tasks declare dependencies incorrectly. Before debating Nx versus Turborepo versus something else, map your actual import graph in the language you ship—TypeScript project references, Go modules, or whatever applies—and reconcile it with what CI believes.
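The reconciliation step can start as a set difference. This sketch assumes you have already extracted two edge lists, one from actual imports and one from what the task runner declares; how you extract them depends on your stack, and every name here is hypothetical.

```python
def graph_drift(imported, declared):
    """Compare real import edges with the edges CI knows about.

    Both arguments are sets of (from_package, to_package) tuples.
    """
    missing = imported - declared  # code imports it, CI does not know: risk of false greens
    stale = declared - imported    # CI still tracks a dead edge: wasted rebuilds
    return missing, stale

IMPORTED = {
    ("web-app", "ui-kit"),
    ("web-app", "api-client"),
    ("api-client", "schemas"),
}
DECLARED = {
    ("web-app", "ui-kit"),
    ("api-client", "schemas"),
    ("web-app", "legacy-utils"),
}

missing, stale = graph_drift(IMPORTED, DECLARED)
print("undeclared:", sorted(missing))
print("stale:", sorted(stale))
```

Undeclared edges are the dangerous kind, because affected-project logic will skip work it should have run. Stale edges only cost minutes.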

Also distinguish between “build” and “test” graphs. They overlap, but not perfectly. You might skip compiling a package while still needing to run its integration tests when shared contracts change. Your pipeline should encode those rules explicitly, not as tribal knowledge in a few senior engineers’ heads.
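One way to take those rules out of people's heads is to keep separate input sets for build and test and let the pipeline read both. The sketch below is deliberately flat (direct inputs only, no transitive walk), and the package names, including `api-contracts`, are invented for the example.

```python
def plan_tasks(changed, build_inputs, test_inputs):
    """Return (packages to build, packages to test) for a set of changed packages."""
    to_build = sorted(pkg for pkg, inputs in build_inputs.items() if inputs & changed)
    to_test = sorted(pkg for pkg, inputs in test_inputs.items() if inputs & changed)
    return to_build, to_test

# What each package needs recompiled for.
BUILD_INPUTS = {
    "checkout-service": {"checkout-service", "payments-sdk"},
    "payments-sdk": {"payments-sdk"},
}
# What each package needs retested for: a superset that includes shared contracts.
TEST_INPUTS = {
    "checkout-service": {"checkout-service", "payments-sdk", "api-contracts"},
    "payments-sdk": {"payments-sdk"},
}

print(plan_tasks({"api-contracts"}, BUILD_INPUTS, TEST_INPUTS))
# ([], ['checkout-service'])
```

A contract change triggers the integration tests for `checkout-service` without recompiling it, and the rule lives in reviewable data instead of in a senior engineer's memory.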

Finally, keep an eye on developer laptops. A graph that is tuned for CI but confusing locally will get bypassed with ad hoc commands, and then you lose determinism. Good monorepo tooling should make the same commands work in CI and on a fresh clone, modulo credentials.

Remote caching: when it helps and when it hides problems

Remote caching turns repeated work into reuse. It also introduces a new failure class: stale artifacts. If someone bypasses cache invalidation rules, or if an input that changes the output (an environment variable, a toolchain version) is missing from the cache key, you can get confusing “works on my machine” behavior that is actually “works on my warm cache.” Keep secrets out of both cache keys and cached artifacts. Invest in cache observability: hit rates, miss reasons, and the ability to bust safely when needed.
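Cache observability does not need a dashboard on day one. Assuming your runner can emit one record per task with a hit flag and a miss reason (the record shape below is an assumption, not any tool's log format), a short summary goes a long way.

```python
from collections import Counter

def summarize_cache(events):
    """Return (hit rate, miss reasons ordered by frequency).

    events is a list of dicts with 'task', 'hit' (bool), and 'reason' for misses.
    """
    total = len(events)
    hits = sum(1 for event in events if event["hit"])
    reasons = Counter(event["reason"] for event in events if not event["hit"])
    hit_rate = hits / total if total else 0.0
    return hit_rate, reasons.most_common()

EVENTS = [
    {"task": "ui-kit:build", "hit": True, "reason": None},
    {"task": "web-app:build", "hit": False, "reason": "lockfile changed"},
    {"task": "web-app:test", "hit": False, "reason": "lockfile changed"},
    {"task": "api-client:build", "hit": False, "reason": "env var changed"},
]

hit_rate, reasons = summarize_cache(EVENTS)
print(f"hit rate: {hit_rate:.0%}")
for reason, count in reasons:
    print(f"  {count}x {reason}")
```

The ranking of miss reasons is usually more useful than the headline rate, because it tells you which input to fix first.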

For regulated environments, check whether your cache provider meets data residency expectations. Build logs and artifact metadata can be more sensitive than they look.

Practical mitigation strategies

Invest in affected-project workflows. Make sure your tooling understands your dependency edges. If the graph is wrong, everything downstream is theater.

Tighten boundaries. Split large shared modules when they become merge magnets. Encourage APIs that minimize accidental surface area.

Separate fast and slow feedback. Put cheap checks early; put expensive end-to-end suites on schedules or selective triggers when safe.

Measure queue time, not just duration. A ten-minute job that waits fifty minutes in queue still ruins a day.

Audit third-party CI actions. Small convenience steps add seconds each; seconds add up across thousands of PRs.

Organizational angles people underestimate

Monorepos surface sociotechnical issues. Code ownership blurs; teams need clear guidelines for who approves changes in shared packages. Without ownership, reviews become either rubber stamps or endless debates. Neither helps throughput.
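Ownership rules are easier to enforce when they are data. The sketch below assumes a simple path-prefix mapping where the most specific prefix wins; the team names, paths, and `owner_for` helper are hypothetical, and hosted platforms have their own ownership file formats with different matching rules.

```python
def owner_for(path, rules):
    """Return the team for the longest matching path prefix, or None if unowned."""
    best = None
    for prefix, team in rules.items():
        base = prefix.rstrip("/")
        if path == base or path.startswith(base + "/"):
            if best is None or len(base) > len(best[0]):
                best = (base, team)
    return best[1] if best else None

RULES = {
    "packages/": "platform",
    "packages/ui-kit/": "design-systems",
    "services/billing/": "payments",
}

for path in ("packages/ui-kit/src/button.tsx", "packages/logger/index.ts", "tools/release.py"):
    print(path, "->", owner_for(path, RULES))
```

Paths that resolve to `None` are the ones worth listing in a report: they are where reviews tend to become rubber stamps.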

Release strategy also changes. If everything lives together, your versioning story must be explicit: independent deployables, coordinated releases, or a hybrid. Tooling cannot decide philosophy for you.

What to measure on a monthly cadence

Pick a small set of metrics and review them regularly: median pipeline duration for PRs, P95 queue time, flake rate, cache hit rate, and mean time to recover from a broken mainline. Trends matter more than single spikes. If duration creeps up month over month while team size stays flat, you are probably paying interest on architectural shortcuts.
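A minimal sketch of the monthly summary, assuming you can export one record per pipeline run with queue and execution minutes. The field names and sample numbers are invented, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math
from statistics import median

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value covering pct percent of the data."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def summarize(runs):
    """Summarize pipeline runs given dicts with 'queue_min' and 'duration_min'."""
    queues = [run["queue_min"] for run in runs]
    durations = [run["duration_min"] for run in runs]
    return {
        "median_duration_min": median(durations),
        "median_queue_min": median(queues),
        "p95_queue_min": percentile(queues, 95),
    }

QUEUE = [1, 1, 2, 2, 3, 3, 4, 5, 8, 50]
DURATION = [9, 10, 10, 11, 10, 12, 9, 10, 11, 10]
RUNS = [{"queue_min": q, "duration_min": d} for q, d in zip(QUEUE, DURATION)]

print(summarize(RUNS))
```

In this sample the median queue time is 3 minutes and the median duration is 10, which both look healthy. The P95 queue time is 50 minutes, which is the number the developer who hit it will remember.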

Pair metrics with qualitative checks: survey developers on whether CI failures feel actionable. A noisy signal is a tax on attention, even if it is technically “correct.”

Migration realities: polyrepo to mono and back

Moving into a monorepo is rarely a weekend hackathon. You need import rewriting, history preservation decisions, and CI rewiring. Moving out is equally painful. Treat migrations as programs with milestones: first unify standards, then consolidate code, then optimize the graph. Skipping straight to “we installed a task runner” leaves the hard parts untouched.

A sane decision lens

Stay in a monorepo when your coordination wins outweigh CI costs and your graph stays comprehensible. Consider splitting or layering when teams spend more time fighting the build than shipping product—or when unrelated business units share a repo for political convenience rather than technical coupling.

If you split, do it with migration plans: duplicate code temporarily if needed, carve boundaries, and measure operational overhead on the other side. Multiple repos have their own tax: duplicated CI config, dependency drift, and slower cross-repo refactors.

Closing take

Monorepo tooling is not magic; it is a budgeting exercise in compute, attention, and coupling. The flashy demos show green dashboards and cache hits. The real story is whether your team trusts the pipeline enough to move fast without rerunning the universe on every whim. When CI minutes eat more than they save, the fix is rarely “buy more runners” first—it is to rebuild the graph, tighten boundaries, and remember why you wanted one repository in the first place.

If you take one habit into the next planning cycle, make it this: before adding a new check, estimate its CI cost and its expected defect catch rate. If you cannot ballpark both, you are not ready to institutionalize the step. Monorepos reward clarity—about dependencies, ownership, and what “done” means in a pipeline. Invest there, and the minutes tend to follow. That is how tooling stops eating your team alive.
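For the ballpark itself, a few lines of arithmetic are enough. Every number below (run volume, minutes per run, price per minute, defects caught) is a placeholder to replace with your own, and the `check_cost_benefit` helper is only a sketch of the estimate, not a standard formula.

```python
def check_cost_benefit(runs_per_month, minutes_per_run, cost_per_minute, defects_caught_per_month):
    """Return (monthly cost, cost per defect caught) for a proposed CI check."""
    monthly_cost = runs_per_month * minutes_per_run * cost_per_minute
    if defects_caught_per_month:
        cost_per_defect = monthly_cost / defects_caught_per_month
    else:
        cost_per_defect = float("inf")  # a check that catches nothing has no payoff
    return monthly_cost, cost_per_defect

monthly_cost, cost_per_defect = check_cost_benefit(
    runs_per_month=4000,
    minutes_per_run=1.5,
    cost_per_minute=0.008,
    defects_caught_per_month=2,
)
print(f"monthly cost: {monthly_cost:.2f}, per defect caught: {cost_per_defect:.2f}")
```

The compute figure is usually the small part. Add the developer minutes spent waiting on the check and the comparison often looks different.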