OpenTelemetry for Solo Developers: Observability Without the Enterprise Bloat

April 8, 2026

OpenTelemetry (OTel) arrives with enterprise baggage: service meshes, vendor halls at conferences, and diagrams with more boxes than a small SaaS has services. Solo developers see that and go back to printf debugging. That is a shame, because the core ideas scale down to a single binary on a cheap VPS. You do not need a platform team to benefit from consistent traces, metrics, and logs; you need a ruthless focus on the smallest useful setup.

This article is for indie builders, consultants, and small teams who want observability that scales with complexity but does not eat the weekend. We will strip OTel down to what matters, show where it overlaps with tools you already run, and name the traps that turn “lightweight tracing” into a second job.

If you already run structured logs, you are halfway there. If you already expose Prometheus metrics, you are closer still. OpenTelemetry does not replace those tools overnight—it gives you a path to unify context so you can stop mentally stitching together three tabs during an outage.

What OpenTelemetry actually standardizes

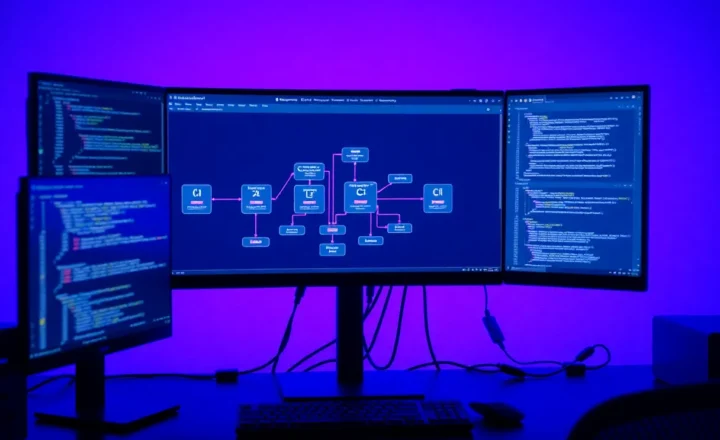

At its heart, OTel defines how instrumentation emits signals: traces for request paths, metrics for aggregates, logs as correlated events. It also defines a wire protocol (OTLP) and exporters that send those signals to backends: Jaeger, Prometheus, Grafana Cloud, vendor APMs, or a self-hosted stack.

The win for solos is consistency. Instrument once in your framework, reuse the same patterns across services as you split them, and avoid rewriting observability every time you outgrow a single process.

Why three signals beat one

Logs tell you what happened in words. Metrics tell you how often and how fast in aggregate. Traces tell you where time disappeared along a path. Each signal fails differently: logs drown you in text without structure; metrics hide outliers; traces become expensive if you keep every span. OTel’s value is giving you one vocabulary to combine them instead of duct-taping three ad hoc formats after every outage.

Vendors and lock-in

OpenTelemetry is not a hosted product; it is an open specification with SDKs and a collector built around it. Vendors integrate with it because customers demand portability. For solo developers, that means you can start with a free stack and move to a paid APM later without rewriting every instrumentation call, provided you keep your semantic conventions sane.

The minimum viable solo stack

Start with one backend you will actually look at. A common pattern is Prometheus for metrics, Loki or plain files for logs, and Tempo or Jaeger for traces—or an all-in-one Grafana stack if you want fewer moving parts. The specific brands matter less than the habit of checking them before users complain.

Instrument the edges first: HTTP handlers, database calls, and outbound integrations. Those spans pay rent immediately when latency spikes. Deep instrumentation inside every function can wait until you are hunting a specific bug class.
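To make "instrument the edges" concrete, here is a minimal standard-library sketch, not the OTel SDK. It wraps each boundary call in a context manager that records how often it ran, how often it failed, and how long it took. The names `edge`, `EDGE_STATS`, `fetch_user`, and `call_billing_api` are hypothetical, and a real setup would hand these numbers to a metrics or tracing library instead of a dict.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Hypothetical in-memory store: edge name -> rate, error, and duration counters.
EDGE_STATS = defaultdict(lambda: {"count": 0, "errors": 0, "total_seconds": 0.0})


@contextmanager
def edge(name):
    """Time one call across a boundary and count failures."""
    stats = EDGE_STATS[name]
    start = time.perf_counter()
    try:
        yield
    except Exception:
        stats["errors"] += 1
        raise  # never swallow the caller's exception
    finally:
        stats["count"] += 1
        stats["total_seconds"] += time.perf_counter() - start


def fetch_user(user_id):
    # Stand-in for a real database call.
    with edge("db.select_user"):
        return {"id": user_id}


def call_billing_api():
    # Stand-in for an outbound integration that times out.
    with edge("http.billing"):
        raise TimeoutError("billing API timed out")
```

Keeping the edge names to a short, fixed list is deliberate: each name becomes a series you can graph, so the list should grow only when a new dependency appears.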

Single binary apps: keep it boring

If you run one process behind a reverse proxy, you can defer distributed tracing complexity and still win with structured logs plus RED metrics. Add a trace ID to requests early and propagate it through your logger. When you split into workers or microservices later, OTel grows with you instead of forcing a rewrite.
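A sketch of the "trace ID in every log line" habit, using only the Python standard library. It assumes a single-process app; `build_logger`, `handle_request`, and the log format are illustrative names and choices, not part of any OTel API. The ID is 32 hex characters so it has the same shape as a W3C trace ID if you adopt real tracing later.

```python
import contextvars
import logging
import uuid

# Holds the current request's trace ID; "-" means no request is active.
trace_id_var = contextvars.ContextVar("trace_id", default="-")


class TraceIdFilter(logging.Filter):
    """Copy the current trace ID onto every log record."""

    def filter(self, record):
        record.trace_id = trace_id_var.get()
        return True


def build_logger(stream):
    """Return a logger whose lines always carry trace_id=<id>."""
    logger = logging.getLogger("app")
    logger.handlers.clear()
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(
        logging.Formatter("%(levelname)s trace_id=%(trace_id)s %(message)s")
    )
    handler.addFilter(TraceIdFilter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger


def handle_request(logger, incoming_trace_id=None):
    # Reuse an upstream ID when the proxy supplied one; otherwise mint one.
    trace_id = incoming_trace_id or uuid.uuid4().hex
    token = trace_id_var.set(trace_id)
    try:
        logger.info("request started")
        return trace_id
    finally:
        trace_id_var.reset(token)
```

Because the ID lives in a context variable, code deep in the call stack can log without having the ID passed to it explicitly.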

Containers and compose files

Docker Compose is enough for many solo stacks: app, collector, metrics store, trace store, Grafana. Pin versions, snapshot configs in git, and document how to bring the stack up after a laptop dies. Observability that cannot be recreated is observability that evaporates in emergencies.

Where solo developers over-engineer

Over-engineering looks like running five collectors, three agents, and a sidecar when a single OpenTelemetry Collector could fan out signals. It looks like tracing every loop in hot code before measuring overhead. It looks like building custom dashboards before you know which questions you ask weekly.

Under-engineering is the opposite trap: shipping without request IDs, then wondering why a production request cannot be followed across nginx, app, and worker logs. OTel is a structured way to avoid that dead end.

Healthy middle ground: one collector config checked into git, one dashboard you open weekly, and a rule that new dependencies get instrumented before launch. Small systems stay small only if you refuse silent regressions.

Costs you should budget honestly

CPU overhead is usually modest if you sample traces and avoid high-cardinality label explosions. Storage costs dominate when you retain everything forever. Set retention aggressively short for traces, longer for metrics, and treat logs as something you can afford to grep—not a permanent archive unless regulations say otherwise.
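A back-of-envelope calculation shows how much sampling and retention matter. Every number below is a made-up workload for illustration, and the estimate ignores compression and index overhead, so treat it as an order-of-magnitude sketch rather than a sizing tool.

```python
def trace_storage_gb(requests_per_day, spans_per_request, bytes_per_span,
                     sample_rate, retention_days):
    """Rough steady-state trace storage in gigabytes (10**9 bytes)."""
    spans_kept_per_day = requests_per_day * spans_per_request * sample_rate
    return spans_kept_per_day * bytes_per_span * retention_days / 1e9


# Hypothetical workload: 200k requests/day, 8 spans each, ~500 bytes per span.
keep_everything = trace_storage_gb(200_000, 8, 500,
                                   sample_rate=1.0, retention_days=30)  # about 24 GB
sampled = trace_storage_gb(200_000, 8, 500,
                           sample_rate=0.1, retention_days=7)           # about 0.56 GB
```

Under these assumptions, sampling one request in ten and keeping a week instead of a month cuts storage by a factor of roughly forty.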

Your time is also a cost. If observability setup exceeds a day without producing one actionable alert, pause and simplify.

Cardinality traps

High-cardinality labels—user IDs on every metric, unbounded URL paths—will cheerfully bankrupt Prometheus-style systems. Prefer bounded labels: route templates, status classes, dependency names. Put unique identifiers in traces and logs where sampling protects you.
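One way to keep labels bounded is to normalize them before they reach a metric. The sketch below assumes IDs appear as whole path segments (all digits, or a UUID); the helper names are hypothetical. Most frameworks can hand you the matched route template directly, which is preferable when available.

```python
import re

# Assumed URL shape: numeric IDs and UUIDs occupy whole path segments.
_UUID = re.compile(
    r"^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$", re.I
)


def route_template(path):
    """Collapse unbounded path segments into placeholders."""
    parts = []
    for segment in path.split("?")[0].strip("/").split("/"):
        if segment.isdigit():
            parts.append(":id")
        elif _UUID.match(segment):
            parts.append(":uuid")
        else:
            parts.append(segment)
    return "/" + "/".join(parts)


def status_class(status_code):
    """Map 200 to '2xx', 404 to '4xx', and so on."""
    return f"{status_code // 100}xx"


def metric_labels(method, path, status_code):
    """Build a label set whose size does not grow with traffic."""
    return {
        "method": method,
        "route": route_template(path),
        "status": status_class(status_code),
    }
```

With this in place, `/users/42/orders/7?page=2` and `/users/43/orders/9` land in the same series instead of creating two.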

Framework integration shortcuts

Most mainstream web frameworks have OTel middleware. Use official auto-instrumentation when possible; hand-roll spans only where they clarify domain work—payment capture, risky migrations, third-party webhooks. The goal is signal, not ceremony.

Semantic conventions save your future brain

OTel defines semantic attributes for HTTP, database systems, messaging, and more. Using standard names helps tools render dashboards automatically and makes your spans comprehensible when you return to the project six months later. Inventing custom attribute names for everything is free at write time and expensive at read time.
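As a small illustration, the mapping below renames ad hoc keys to OTel HTTP semantic convention names. The left-hand names are invented for this example; the right-hand names are taken from the published HTTP conventions, which have changed across versions, so check them against the version your SDK targets.

```python
# Left: ad hoc attribute names (hypothetical).
# Right: OpenTelemetry HTTP semantic convention names.
STANDARD_NAMES = {
    "verb": "http.request.method",
    "status": "http.response.status_code",
    "endpoint": "http.route",
}


def normalize_attributes(attrs):
    """Rename known ad hoc keys; pass unknown keys through unchanged."""
    return {STANDARD_NAMES.get(key, key): value for key, value in attrs.items()}
```

A shim like this is mostly useful during migration; new instrumentation should emit the standard names from the start.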

Sampling strategies that respect your wallet

Keeping every trace is tempting; it is also how you store gigabytes of “healthy” requests. Use head-based sampling as the default and add tail-based sampling when your collector or backend supports it: keep errors and slow traces, discard the median happy path. A graph rarely shows whether its data was sampled, so document the policy next to your dashboards so future-you does not misread graphs.
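The two decisions can be sketched in a few lines. This is an illustration of the idea, not the algorithm any particular SDK or collector uses; the span dictionary shape and the 500 ms threshold are assumptions.

```python
def head_sample(trace_id_hex, ratio):
    """Decide at request start from the trace ID alone.

    Using the ID (not a random draw) means every service that sees the
    same trace ID reaches the same decision.
    """
    low_64_bits = int(trace_id_hex[-16:], 16)
    return low_64_bits < int(ratio * 2**64)


def tail_keep(spans, slow_ms=500):
    """Decide after the trace finishes: keep errors and slow traces."""
    has_error = any(span["error"] for span in spans)
    total_ms = (max(span["end_ms"] for span in spans)
                - min(span["start_ms"] for span in spans))
    return has_error or total_ms >= slow_ms
```

The trade-off is buffering: a head decision costs nothing, while a tail decision requires holding every span of a trace until it completes.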

Alerts that do not wake you for vanity

Solo operators should prefer leading indicators that predict pain: error rate jumps, sustained latency, disk fullness, queue depth. Avoid alert spam; nothing trains you to ignore pages faster than noisy thresholds.
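A common way to quiet a noisy threshold is to require the condition to hold for several consecutive evaluation windows before paging. The sketch below assumes one error-rate sample per window; the 5% threshold and three-window requirement are placeholder values, not recommendations.

```python
def should_alert(error_rates, threshold=0.05, sustained_windows=3):
    """Fire only when the most recent N windows all exceed the threshold."""
    if len(error_rates) < sustained_windows:
        return False
    recent = error_rates[-sustained_windows:]
    return all(rate > threshold for rate in recent)
```

A single spike such as `[0.01, 0.20, 0.01, 0.02]` stays silent, while `[0.01, 0.06, 0.08, 0.07]` pages.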

Runbooks on a postcard

Every alert should link to a three-step runbook: what it means, what to check first, what safe mitigation exists. If you cannot write that, the alert is not ready. Solo does not mean informal—it means concise.

When to pay for hosted observability

Hosted vendors make sense when uptime matters more than dollars and when you lack time to patch storage clusters. Keep export paths open; with OTel, switching vendors is cheaper than it was with the proprietary agents of the past.

Debugging workflows that actually work alone

When something breaks, start from a trace if you have one and identify the slow span. If not, pivot to logs with a request ID. If neither exists, add instrumentation before you attempt a fix, or you will end up fixing the same bug twice. Repeat until your first step is data, not guessing.
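"Identify the slow span" usually means finding the span with the most self time, that is, its duration minus the time spent in its children. The sketch below assumes a flat list of span dictionaries and children that run one after another; with overlapping children the subtraction would overstate the time they account for.

```python
def slowest_by_self_time(spans):
    """Return the span that spent the most time outside its children."""
    child_time = {}
    for span in spans:
        parent = span["parent_id"]
        if parent is not None:
            child_time[parent] = child_time.get(parent, 0) + span["duration_ms"]

    def self_time(span):
        return span["duration_ms"] - child_time.get(span["id"], 0)

    return max(spans, key=self_time)


# Hypothetical trace: the handler looks slow, but the query is the culprit.
trace = [
    {"id": "a", "parent_id": None, "name": "GET /report", "duration_ms": 900},
    {"id": "b", "parent_id": "a", "name": "db.query", "duration_ms": 700},
    {"id": "c", "parent_id": "a", "name": "render", "duration_ms": 150},
]
culprit = slowest_by_self_time(trace)["name"]  # "db.query"
```

Ranking by raw duration would always blame the root span, which is why self time is the more useful number.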

Background jobs and queues

HTTP requests are only half the story for many apps. Workers that process webhooks, emails, or cron tasks need the same IDs propagated through your queue. If a job retries, your traces should say so. If poison messages exist, metrics should show growing backlog. OTel’s baggage and context propagation concepts exist precisely so those hops do not become blind spots.

Kubernetes without a platform team

If you run a tiny cluster, you can still benefit from node and kube-state metrics without adopting every CNCF project. Export cluster metrics, scrape your services, and resist installing five overlapping agents. The goal is visibility into scheduling failures and pod restarts—not a resume-driven architecture.

Security and secrets

Telemetry can leak sensitive data if you log raw payloads or attach PII to span attributes. Treat instrumentation like production code: redact tokens, scrub emails unless necessary, and review exporters’ defaults. A trace is easier to read than a database dump—and that is exactly why you must be careful.
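A minimal scrubbing pass, applied to attributes before they are attached to a span or log line, might look like the following. The key list and the email pattern are assumptions and deliberately simple; they will miss cases, so treat this as a starting point for review rather than a guarantee.

```python
import re

# Assumed list of keys that should never leave the process in clear text.
SENSITIVE_KEYS = {"authorization", "password", "token", "api_key", "set-cookie"}

# Deliberately simple email pattern; it will not catch every valid address.
_EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub(attributes):
    """Return a copy with secrets redacted and emails masked."""
    clean = {}
    for key, value in attributes.items():
        if key.lower() in SENSITIVE_KEYS:
            clean[key] = "[REDACTED]"
        elif isinstance(value, str):
            clean[key] = _EMAIL.sub("[EMAIL]", value)
        else:
            clean[key] = value
    return clean
```

Scrubbing at the point of emission, rather than in the backend, means the sensitive value never crosses the network in the first place.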

Testing your observability

Schedule periodic chaos-lite drills: inject latency into a dependency in staging, trip a failing healthcheck, verify alerts fire to the right channel. If you only test observability during real outages, you are practicing firefighting without a hydrant map.

Living alongside legacy logging

You do not need a big-bang rewrite. Run OpenTelemetry alongside your current logger until correlation IDs line up. Migrate noisy printf statements gradually. Deprecate duplicate metrics once dashboards prove the new ones match. Incremental adoption beats a month-long branch that never merges because “observability isn’t a feature.”

Closing take

OpenTelemetry is not enterprise-only—it is a lingua franca you can adopt incrementally. Start small, export to a backend you will actually open, and expand instrumentation when real incidents justify it. Observability is not about collecting everything; it is about answering the next question faster than printf and hope.

Pick one Friday to review last week’s traces for your slowest endpoint—even if nothing broke. That habit turns observability from insurance into a performance roadmap. Solos do not have a perf team; they have graphs and discipline.