Make.com vs Custom Code for Data Pipelines: When Visual Automation Stalls

April 6, 2026

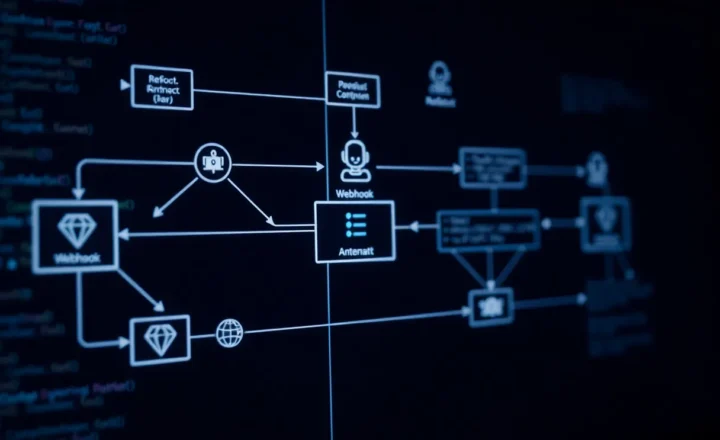

If you have ever watched a demo where someone drags a few blocks on a canvas and suddenly Slack messages, spreadsheets, and CRM records all move in perfect harmony, it is easy to believe visual automation has solved data pipelines for good. Tools like Make.com (formerly Integromat) are genuinely impressive at turning messy business logic into something you can see, test, and hand off to a teammate who does not live in a terminal.

Then reality arrives: a webhook fires twice, a vendor changes an API field, or you need a transform that is three nested loops and a dedupe step away from what the friendly purple module supports. At that moment, the same canvas that felt liberating starts to feel like a cage with very polite walls.

This article is not a rant against no-code. It is a practical map of where visual automation shines, where it tends to stall, and how to decide—without ideology—when custom code (or a hybrid) is the cheaper path in the long run.

What we mean by a “data pipeline” here

In the broadest sense, a data pipeline moves information from A to B (and often through C, D, and E) with some cleaning, enrichment, or routing along the way. That might mean syncing leads from a form into a warehouse, normalising product feeds, aggregating analytics from half a dozen SaaS tools, or keeping two systems eventually consistent when neither was designed to talk to the other.

Visual platforms excel when the pipeline is mostly integration: reliable triggers, well-documented APIs, and transformations that fit the mental model of “take this JSON field, map it to that column.” Custom code excels when the pipeline is mostly computation or control flow: branching logic that depends on historical state, bespoke error handling, performance tuning, or operations that need to be reasoned about as a single program rather than a chain of boxes.

Where Make.com-style tools win

Speed to first working path. If your goal is to prove value in days rather than weeks, a visual router between two mature SaaS products is hard to beat. You get logging, retries, and scheduling without standing up infrastructure. For many small teams, that is the difference between automation happening at all and the idea living forever on a backlog.

Visibility for non-engineers. Operations, marketing, and support teams can follow a scenario, comment on it, and even fix a broken mapping when the engineer is on holiday. The canvas is a shared artefact in a way that a Git repository rarely is for the whole company.

Vendor-maintained connectors. When a popular integration breaks, you are rarely the only customer affected, and the platform vendor has an incentive to ship a fix or workaround. That is underrated compared with maintaining your own OAuth dance with a CRM that “minorly” revamps its developer portal every eighteen months.

Guardrails. Opinionated modules reduce footguns: you are less likely to accidentally open your database to the world because someone pasted a quick Express route into production. For organisations with limited security review capacity, that friction can be a feature.

The stall points: when the canvas stops being honest

The trouble starts when your scenario stops looking like a straight line and starts looking like a program that someone drew with stickers.

Complex branching and state. Real pipelines often need to remember what happened last Tuesday, reconcile partial batches, or skip records based on a combination of fields that no single module exposes cleanly. You can usually force this with routers, filters, and data stores, but each layer adds latency, cognitive load, and places where behaviour diverges from what you think you built.
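To make "remember what happened last Tuesday" concrete, here is a minimal sketch of an incremental sync that stores a high-water mark in SQLite, so each run processes only the records newer than the last one it saw. The record shape, the `watermarks` table and the function names are hypothetical. The sketch simplifies in two ways. It advances the watermark as soon as it returns the ids, whereas a real pipeline would do that only after the downstream write succeeds. It also compares timestamps with a strict `>`.

```python
import sqlite3
from typing import Iterable, List, Tuple

# Hypothetical record shape: (record_id, updated_at as an ISO-8601 string).
Record = Tuple[str, str]


def open_state(path: str = ":memory:") -> sqlite3.Connection:
    """Open the state database and create a one-row-per-pipeline watermark table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS watermarks (pipeline TEXT PRIMARY KEY, last_seen TEXT)"
    )
    return conn


def get_watermark(conn: sqlite3.Connection, pipeline: str) -> str:
    row = conn.execute(
        "SELECT last_seen FROM watermarks WHERE pipeline = ?", (pipeline,)
    ).fetchone()
    # An empty string sorts before any ISO timestamp, so the first run sees everything.
    return row[0] if row else ""


def sync_batch(conn: sqlite3.Connection, pipeline: str, records: Iterable[Record]) -> List[str]:
    """Return ids newer than the stored watermark, oldest first, then advance it."""
    last_seen = get_watermark(conn, pipeline)
    fresh = sorted((r for r in records if r[1] > last_seen), key=lambda r: r[1])
    if fresh:
        conn.execute(
            "INSERT OR REPLACE INTO watermarks (pipeline, last_seen) VALUES (?, ?)",
            (pipeline, fresh[-1][1]),
        )
        conn.commit()
    return [record_id for record_id, _ in fresh]
```

Watch the strict `>`. A record that arrives late with exactly the stored timestamp is skipped. That kind of subtle state bug is easier to pin down with a test than to spot on a canvas.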

Performance and cost at scale. Visual platforms typically charge per operation. That is predictable until it is not. A scenario that polls every five minutes and fans out across thousands of rows can turn a “cheap experiment” into a line item that finance asks uncomfortable questions about. Code running on your own worker or a managed job system often has a different cost curve: higher upfront engineering, lower marginal cost per record once tuned.
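A quick back-of-the-envelope calculation shows how fast per-operation billing compounds. This sketch uses a deliberately simplified model: each run costs one operation for the trigger plus one per module per row. Real platform billing rules differ in detail, and the row and module counts are illustrative, not measured.

```python
def estimate_monthly_operations(
    interval_minutes: int,
    rows_per_run: int,
    modules_per_row: int,
    days: int = 30,
) -> int:
    """Rough operation count under a simplified per-operation billing model.

    Assumption: 1 operation per run for the trigger, plus one operation per
    module for every row (bundle) the run processes.
    """
    runs = (24 * 60 // interval_minutes) * days
    return runs * (1 + rows_per_run * modules_per_row)


# A poll every five minutes, 2,000 rows per run, three modules per row.
print(f"{estimate_monthly_operations(5, 2_000, 3):,}")  # 51,848,640
```

At 200 rows per run the same scenario comes to 5,192,640 operations. That is why an order-of-magnitude estimate of volume matters more than the exact per-row cost.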

Testing and environments. Mature engineering teams want versioned changes, pull requests, and automated tests. Visual tools have improved here, but the experience still lags behind text-based workflows for diffing, mocking external services, and reproducing a production bug on a laptop. When releases feel scary, people stop shipping improvements—and the pipeline ossifies.
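One reason text-based workflows win on testing is that you can swap the external service for a fake. Here is a minimal sketch, assuming a hypothetical `CrmClient` interface and lead shape rather than any real CRM SDK. The test runs under pytest, or as a plain function call, with no network access.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Protocol


class CrmClient(Protocol):
    """Hypothetical interface; the real pipeline would wrap its CRM's SDK behind it."""

    def find_contact(self, email: str) -> dict | None: ...


@dataclass
class FakeCrm:
    """In-memory stand-in for tests: no network, no credentials, fully repeatable."""

    contacts: dict[str, dict] = field(default_factory=dict)

    def find_contact(self, email: str) -> dict | None:
        return self.contacts.get(email)


def plan_upserts(leads: list[dict], crm: CrmClient) -> dict[str, list[str]]:
    """Split incoming leads into 'create' and 'update' buckets by normalised email."""
    plan: dict[str, list[str]] = {"create": [], "update": []}
    for lead in leads:
        email = lead["email"].strip().lower()
        bucket = "update" if crm.find_contact(email) is not None else "create"
        plan[bucket].append(email)
    return plan


def test_existing_contact_is_updated() -> None:
    # A production bug report can be turned into a fixture like this one.
    crm = FakeCrm(contacts={"ada@example.com": {"id": "42"}})
    leads = [{"email": " Ada@Example.com "}, {"email": "bob@example.com"}]
    assert plan_upserts(leads, crm) == {"create": ["bob@example.com"], "update": ["ada@example.com"]}
```

Because the transform only sees the interface, the same function runs against the real client in production and against `FakeCrm` on a laptop.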

Observability depth. Dashboards and execution history are great for “why did this run fail?” They are weaker for “why is this slower than last month?” or “show me the p95 of this transform across ten thousand runs.” Custom code lets you attach the same tracing, metrics, and structured logging you use everywhere else.
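As a small illustration of "the p95 of this transform", the sketch below times each call, sends one structured JSON log record to a logger, and computes a 95th percentile with the standard library. The `pipeline` logger name, the in-memory `durations_ms` list and the `normalise` step are hypothetical stand-ins. A real service would export these numbers to whatever metrics backend it already uses. Note that `statistics.quantiles` needs at least two samples.

```python
import json
import logging
import statistics
import time
from functools import wraps
from typing import Callable, List

logger = logging.getLogger("pipeline")
# Stand-in for a metrics backend; a real service would export these instead.
durations_ms: List[float] = []


def timed(step: str) -> Callable:
    """Decorator: record each call's duration and log it as a structured JSON record."""
    def decorator(fn: Callable) -> Callable:
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            finally:
                elapsed = (time.perf_counter() - start) * 1000
                durations_ms.append(elapsed)
                logger.info(json.dumps({"step": step, "duration_ms": round(elapsed, 3)}))
        return wrapper
    return decorator


def p95(samples: List[float]) -> float:
    """95th percentile; quantiles(n=100) returns 99 cut points and index 94 is the 95th."""
    return statistics.quantiles(samples, n=100)[94]


@timed("normalise")
def normalise(row: dict) -> dict:
    return {key.strip().lower(): value for key, value in row.items()}
```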

Edge cases become product features. The moment you start asking the platform to be a general-purpose runtime—recursive parsing, custom cryptography, heavy numerical work—you are fighting the tool. You might win, but you will not enjoy it.

What custom code buys you (and what it costs)

Writing a pipeline in Python, Node, Go, or another language you already operate well gives you fine-grained control: deterministic behaviour, libraries built for data work, and the ability to refactor messy logic into functions with real names instead of “Set variable 7.”

The costs are familiar but easy to underestimate: deployment, secrets management, dependency updates, on-call rotation, and the slow drift of “just a script on a VM” into tribal knowledge nobody wants to touch. Custom code is not automatically more reliable; it is more flexible. Flexibility without discipline becomes a different kind of stall.

Hybrid approaches are often the adult compromise: use Make.com or Zapier (or similar) for the outer glue—triggers, human-in-the-loop approvals, notifications—and hand off heavy lifting to a small HTTP service or queue worker you own. The visual layer stays readable; the gnarly part lives where tests and profilers live.
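Here is a rough sketch of the "small HTTP service you own" half of a hybrid. The scenario's HTTP module would POST a JSON batch to the service and get the transformed rows back. The payload shape (`rows` plus an optional `key`), the port and the function names are assumptions for illustration. The Python documentation does not recommend `http.server` for production, so treat this as a local prototype and move it behind a proper server with authentication and TLS before anything real depends on it.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any, Dict, List


def dedupe_rows(rows: List[Dict[str, Any]], key: str) -> List[Dict[str, Any]]:
    """The 'gnarly part' in miniature: keep the first row per key, preserving order."""
    seen = set()
    kept = []
    for row in rows:
        value = row.get(key)
        if value not in seen:
            seen.add(value)
            kept.append(row)
    return kept


class TransformHandler(BaseHTTPRequestHandler):
    """Accepts POST {"rows": [...], "key": "id"} and returns the deduplicated rows."""

    def do_POST(self) -> None:
        try:
            length = int(self.headers.get("Content-Length", 0))
            payload = json.loads(self.rfile.read(length) or b"{}")
            result = {"rows": dedupe_rows(payload["rows"], payload.get("key", "id"))}
            status = 200
        except (ValueError, KeyError, TypeError, AttributeError) as exc:
            # Malformed JSON, missing "rows", or wrong types all become a 400.
            result, status = {"error": f"bad request: {exc!r}"}, 400
        body = json.dumps(result).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(host: str = "127.0.0.1", port: int = 8080) -> None:
    # Blocks forever. Add authentication and TLS before exposing this beyond localhost.
    HTTPServer((host, port), TransformHandler).serve_forever()
```

The split keeps `dedupe_rows` a plain function that you can unit-test without starting a server. The handler is only a thin JSON adapter around it.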

A decision framework you can actually use

Before you pick a side, answer these questions on paper. If you are honest, the answer is rarely pure no-code or pure code.

- How often will this change? Weekly tweaks favour visual tools if the changes stay shallow. Monthly structural changes favour code if you need reviewable diffs and automated checks.

- What happens if it fails silently? If the worst case is a delayed newsletter, a visual retry might be enough. If the worst case is incorrect financial reporting, you probably want typed schemas, idempotency keys, and tests.

- What is your realistic scale in twelve months? Order of magnitude matters. Ten times the volume can flip the economics of per-operation pricing or expose bottlenecks you did not measure at small N.

- Who maintains it? A pipeline owned by engineers should look like engineering work. A pipeline owned by operations should be operable without paging someone who dreams in stack traces. Sometimes that means more visual tooling; sometimes it means better internal abstractions, not fewer of them.

- Can you draw the happy path on one screen? If yes, stay visual longer. If you need three screens and a legend, you already have a program; you are just drawing it the hard way.
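Idempotency keys come up in the failure question above, and they are also the standard answer to a webhook that fires twice. This minimal sketch assumes the sender either supplies a stable identifier (the `event_id` field is hypothetical) or that hashing the canonicalised payload is an acceptable fallback. The in-memory set stands in for a durable store, such as a table with a unique constraint.

```python
import hashlib
import json
from typing import List, Set


class IdempotentProcessor:
    """Apply each webhook event at most once, keyed by an idempotency key."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()  # stand-in for a durable, unique-keyed store
        self.applied: List[dict] = []

    @staticmethod
    def key_for(event: dict) -> str:
        # Prefer a sender-supplied id; otherwise hash the payload with sorted keys
        # so that the same content always produces the same key.
        if "event_id" in event:
            return str(event["event_id"])
        canonical = json.dumps(event, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def handle(self, event: dict) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        key = self.key_for(event)
        if key in self._seen:
            return False
        self._seen.add(key)
        self.applied.append(event)  # the real side effect would go here
        return True
```

In production, recording the key and performing the side effect should commit together, for example in one database transaction. Otherwise a crash between the two steps can lose or repeat work.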

Concrete scenarios (and where they land)

New lead arrives from a form: validate email format, dedupe against a simple list, post to Slack, and create a CRM contact. This is the sweet spot for visual automation—few branches, well-supported modules, and failures that are easy to spot in a channel.

Hourly sync of inventory between an ERP and a storefront: still plausible in a visual tool if record counts are modest and the APIs are stable. The moment you need conflict resolution (“warehouse says 3, ecommerce says 5, last edit wins is wrong”), you are encoding business rules. That is code-shaped work even if you build it with routers.
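Here is what "encoding business rules" can look like once it leaves the canvas. The rule set below is entirely hypothetical and stands in for whatever your business actually decides. The point is that each rule is explicit, named and testable, including the deliberate rejection of "last edit wins".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resolution:
    sku: str
    publish_qty: int     # quantity to push to the storefront
    needs_review: bool   # flag for a human when the systems disagree too much


def reconcile(sku: str, warehouse_qty: int, storefront_qty: int,
              unsynced_orders: int, tolerance: int = 1) -> Resolution:
    """Hypothetical rule set:

    1. The warehouse count is authoritative for physical stock, regardless of
       which system was edited last ("last edit wins" is deliberately rejected).
    2. Storefront orders not yet reflected in the warehouse count are subtracted.
    3. Availability never goes below zero.
    4. A gap larger than `tolerance` between the result and the storefront's
       current value is flagged, so the overwrite is never silent.
    """
    publish_qty = max(warehouse_qty - unsynced_orders, 0)
    needs_review = abs(publish_qty - storefront_qty) > tolerance
    return Resolution(sku, publish_qty, needs_review)
```

For the example in the text, `reconcile("SKU-1", warehouse_qty=3, storefront_qty=5, unsynced_orders=0)` publishes 3 and flags the SKU for review.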

Streaming or near-real-time analytics: usually a poor fit for classic scenario runners. Batching, backpressure, and exactly-once semantics do not map cleanly to “one execution per bundle.” Here you want a stream processor, a warehouse load, or at minimum a queue and workers you control.
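To show what "a queue and workers you control" buys you, here is a minimal in-process sketch of batching with backpressure. A bounded `queue.Queue` makes the producer block when consumers fall behind. It does not attempt exactly-once delivery, which needs a durable queue or a stream processor. The batch sizes and the list used as a sink are placeholders.

```python
import queue
import threading
from typing import Iterable, List

SENTINEL = object()  # tells a worker to stop


def worker(q: queue.Queue, batch_size: int, sink: List[list], lock: threading.Lock) -> None:
    """Drain the queue in batches; a real sink might be a warehouse bulk load."""
    batch: list = []
    while True:
        item = q.get()
        if item is SENTINEL:
            break
        batch.append(item)
        if len(batch) >= batch_size:
            with lock:
                sink.append(batch)
            batch = []
    if batch:  # flush the partial batch on shutdown
        with lock:
            sink.append(batch)


def run_pipeline(events: Iterable[object], *, maxsize: int = 100,
                 batch_size: int = 10, workers: int = 2) -> List[list]:
    # maxsize bounds memory: q.put() blocks while the queue is full, which is
    # the backpressure that slows the producer to the consumers' pace.
    q: queue.Queue = queue.Queue(maxsize=maxsize)
    sink: List[list] = []
    lock = threading.Lock()
    threads = [threading.Thread(target=worker, args=(q, batch_size, sink, lock))
               for _ in range(workers)]
    for t in threads:
        t.start()
    for event in events:
        q.put(event)
    for _ in threads:  # one sentinel per worker, queued after all real events
        q.put(SENTINEL)
    for t in threads:
        t.join()
    return sink
```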

One-off CSV cleanup for a campaign: do not spin up Kubernetes. A notebook or a fifty-line script in a repo you can throw away is often faster than prettifying a canvas nobody will revisit. The lesson is about lifespan: long-lived paths deserve engineering discipline; ephemeral chores deserve the smallest tool that is still safe.
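For scale, this is roughly what such a throwaway script might look like, using only the standard `csv` module. It assumes the file has a header row with an `email` column; the cleaning rules (trim, lowercase, drop blanks and duplicates) are illustrative.

```python
import csv
import io
from typing import TextIO


def clean_contacts(src: TextIO, dst: TextIO) -> int:
    """Trim whitespace, lowercase emails, and drop blank or duplicate emails.

    Assumes a header row containing an 'email' column; other columns pass through.
    Returns the number of data rows written.
    """
    reader = csv.DictReader(src)
    if reader.fieldnames is None:  # empty input: nothing to do
        return 0
    writer = csv.DictWriter(dst, fieldnames=reader.fieldnames)
    writer.writeheader()
    seen = set()
    written = 0
    for raw in reader:
        # Skip overflow cells (stored under the None key) and treat missing cells as "".
        row = {k: (v or "").strip() for k, v in raw.items() if k is not None}
        email = row["email"].lower()
        if not email or email in seen:
            continue
        seen.add(email)
        row["email"] = email
        writer.writerow(row)
        written += 1
    return written


def clean_csv_text(text: str) -> str:
    """Notebook-friendly wrapper: CSV text in, cleaned CSV text out."""
    src, dst = io.StringIO(text), io.StringIO()
    clean_contacts(src, dst)
    return dst.getvalue()
```

For files, open both ends with `newline=""`, as the `csv` documentation recommends, and pass the handles to `clean_contacts`.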

Migration without drama

When you outgrow a scenario, treat the move like any other system migration: shadow outputs, compare aggregates, and cut over with a feature flag or parallel run. The visual workflow is your specification—annoying, but useful. Export execution logs as fixtures and replay them against the new service. The goal is not purity; the goal is confidence.
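Replaying exported runs can be as simple as the sketch below. It feeds each recorded input through the new code, lists the rows whose output differs, and sums numeric fields on both sides as a coarse aggregate check. The fixture shape (`{"input": ..., "output": ...}`) and the `id` key are assumptions about how you export execution history. Real comparisons on money or floats may also want a tolerance.

```python
from collections import Counter
from typing import Callable, Dict, Iterable, List


def _add_numeric(totals: Counter, record: Dict) -> None:
    """Accumulate every numeric (non-boolean) field of a record into `totals`."""
    for name, value in record.items():
        if isinstance(value, (int, float)) and not isinstance(value, bool):
            totals[name] += value


def shadow_compare(
    fixtures: Iterable[Dict],
    new_transform: Callable[[Dict], Dict],
    key_field: str = "id",
) -> Dict:
    """Replay recorded runs through the new code; report row mismatches and totals.

    Assumed (hypothetical) fixture shape: {"input": {...}, "output": {...}},
    where "output" is what the old scenario produced for that input.
    """
    mismatched: List = []
    old_totals: Counter = Counter()
    new_totals: Counter = Counter()
    for fixture in fixtures:
        expected = fixture["output"]
        actual = new_transform(fixture["input"])
        if actual != expected:
            mismatched.append(expected.get(key_field))
        _add_numeric(old_totals, expected)
        _add_numeric(new_totals, actual)
    return {
        "mismatched_ids": mismatched,
        "old_totals": dict(old_totals),
        "new_totals": dict(new_totals),
    }
```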

Document the implicit assumptions while you still remember them: rate limits, retry semantics, and which fields are nullable in practice versus in the vendor’s marketing screenshot. Those details are the real spec; the boxes on the canvas are just one rendering of it.
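One way to turn retry semantics from folklore into something reviewable is to write the policy down as code. This sketch uses capped exponential backoff with full jitter. The `RetryableError` class and the default numbers are assumptions. It does not reproduce any platform's built-in retry behaviour, and the `sleep` parameter exists so that tests can run without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Raise this for failures worth retrying (for example HTTP 429 or 503)."""


def with_retries(
    call: Callable[[], T],
    attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RetryableError with capped exponential backoff and full jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except RetryableError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            # Full jitter: wait a random time between 0 and the capped exponential bound.
            bound = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, bound))
```

Any other exception propagates immediately. The choice of which errors count as retryable is the part of the spec worth arguing about in review.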

If you keep a thin visual layer after migration, use it only for what it is good at—human-friendly triggers and alerts—rather than re-creating the entire pipeline twice. Duplication across systems is how teams end up with two sources of truth and zero people who trust either one.

Bottom line

Make.com and its peers are not “toys.” They are legitimate integration layers that compress time-to-value and democratise automation. They stall when your problem stops being integration and starts being software engineering in disguise—stateful logic, strict correctness, performance envelopes, and deep observability.

Pick the tool that matches the shape of the work, and do not let aesthetics decide. A neat canvas is not a substitute for a correct pipeline—but a pile of microservices is not a substitute for shipping, either. The best teams mix both on purpose, not by accident.