Indie SaaS Pricing Tests That Still Work in 2026 (Without Race-to-Bottom Discounts)

April 6, 2026

Pricing experiments on an indie SaaS used to be simple: change a number, watch sign-ups for a week, declare victory, and move on. In 2026 the environment is noisier—buyers compare plans across tabs, annual discounts are table stakes in some categories, and AI-assisted tools have inflated expectations about what software “should” cost. The old playbook of slashing prices to learn anything is not just lazy; it trains the wrong customers to wait for the next coupon.

The good news is that several tests still produce signal if you design them around value, fairness, and retention—not vanity top-of-funnel metrics. Below are approaches that remain legitimate for small teams, plus the traps that make results lie to you.

Start with the question you are actually trying to answer

Every pricing test should map to one primary decision: Are we leaving money on the table? Are we losing deals on trust rather than price? Is our packaging confusing power users? Are we attracting customers who churn once the promo ends? If you cannot state the decision in a sentence, you are not running an experiment—you are rearranging deck chairs.

Write that sentence before you touch the pricing page. It will keep you from running five overlapping changes and arguing about which one “worked.”

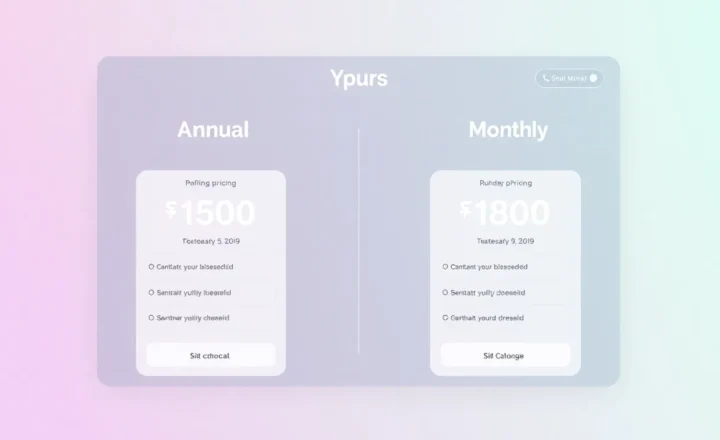

Test 1: Packaging, not just the sticker price

Moving a dollar amount up or down is the shallowest lever. A more durable test in 2026 is whether customers understand what they get at each tier. Try:

- Moving a feature that power users need from the middle tier to the top tier (or the reverse) while holding headline prices steady.

- Replacing vague “Pro” labels with outcome-based names (“For teams publishing weekly” vs “For solo creators”).

- Splitting one crowded tier into two clearer plans with smaller price gaps, even if average revenue per user moves only slightly at first.

Packaging tests work because they change who selects which plan, not just how much the same person pays. Measure upgrade rate, downgrade rate, and support tickets asking “which plan do I need?” Those are your leading indicators that the page is doing its job.
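Those three leading indicators only need a few counts per period. The Python sketch below assumes you can export paying-account counts, tier changes and support tickets tagged as plan questions. The `PeriodStats` field names are hypothetical, and the before/after numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Counts for one observation window (hypothetical field names)."""
    active_accounts: int        # paying accounts at the start of the window
    upgrades: int               # accounts that moved to a higher tier
    downgrades: int             # accounts that moved to a lower tier
    support_tickets: int        # all tickets opened in the window
    plan_question_tickets: int  # tickets tagged "which plan do I need?"


def packaging_indicators(s: PeriodStats) -> dict[str, float]:
    """Return upgrade rate, downgrade rate and plan-confusion share as fractions."""
    return {
        "upgrade_rate": s.upgrades / s.active_accounts,
        "downgrade_rate": s.downgrades / s.active_accounts,
        # Guard against a quiet period with no tickets at all.
        "plan_confusion_share": (
            s.plan_question_tickets / s.support_tickets if s.support_tickets else 0.0
        ),
    }


# Illustrative numbers only: before and after a packaging change.
before = PeriodStats(400, 12, 6, 80, 16)
after = PeriodStats(410, 18, 5, 70, 7)
for label, stats in (("before", before), ("after", after)):
    print(label, {k: round(v, 3) for k, v in packaging_indicators(stats).items()})
```

Reading the three numbers together matters: a higher upgrade rate paired with a falling share of "which plan?" tickets suggests clearer packaging, not just a pushier page.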

Test 2: Annual prepay framed as certainty, not desperation

Deep evergreen discounts teach customers that your list price is fictional. Instead of racing to the bottom, test annual billing as a risk reduction story: locked-in features, predictable renewals, onboarding credits, or a modest but honest months-free equivalent (for example, two months off when paying yearly—clear, bounded, easy to explain).
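The months-free framing is easy to sanity-check before you put it on the page. The sketch below assumes a hypothetical $29 monthly list price. With two months free, the yearly price equals ten monthly payments, which is an effective discount of 2/12, or roughly 16.7%.

```python
def annual_offer(monthly_price: float, months_free: int = 2) -> tuple[float, float]:
    """Return (annual price, effective discount) for an N-months-free annual plan."""
    if not 0 <= months_free < 12:
        raise ValueError("months_free must be between 0 and 11")
    annual_price = monthly_price * (12 - months_free)  # pay for the remaining months
    effective_discount = months_free / 12              # share of a full year given away
    return annual_price, effective_discount


price, discount = annual_offer(29.0)  # hypothetical $29/month plan
print(f"Annual: ${price:.2f} (about {discount:.1%} off twelve monthly payments)")
# Annual: $290.00 (about 16.7% off twelve monthly payments)
```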

Compare churn at month three and month twelve between cohorts who joined on monthly versus annual. If annual users are stickier, your discount is buying retention, not just cash flow. If they churn at the same rate, your annual push might be subsidising the wrong segment.
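A minimal sketch of that comparison. It assumes each customer record holds the billing plan chosen at signup and the month the account churned (or `None` if still active). It also assumes every customer joined at least twelve months ago, so nobody is censored. The records are invented for illustration.

```python
from collections import defaultdict

# Hypothetical records: (billing plan at signup, churn month or None if still active).
# Assumes every customer has at least twelve months of history (no censoring).
customers = [
    ("monthly", 2), ("monthly", 5), ("monthly", None), ("monthly", 11),
    ("annual", None), ("annual", 12), ("annual", None), ("annual", None),
]


def churn_by_month(records, month):
    """Share of each billing cohort that had churned on or before `month`."""
    totals, churned = defaultdict(int), defaultdict(int)
    for plan, churn_month in records:
        totals[plan] += 1
        if churn_month is not None and churn_month <= month:
            churned[plan] += 1
    return {plan: churned[plan] / totals[plan] for plan in totals}


for m in (3, 12):
    print(f"month {m}:", churn_by_month(customers, m))
```

With real data, customers who joined recently should be excluded from the month-twelve figure rather than counted as retained, or the annual cohort will look better than it is.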

Test 3: Usage-based add-ons instead of blanket cuts

When prospects say “too expensive,” indie founders often hear “lower the price.” Sometimes they mean “I do not see why I should pay for seats or API calls I will not use.” A controlled test is to introduce metered overages or à la carte modules for heavy behaviours—extra workspaces, higher export limits, priority sync—while keeping the core plan’s headline price stable.

This approach preserves perceived value, captures revenue from the customers who stress your costs, and avoids signalling that your baseline product is worth less. Watch support load: if metering confuses people, simplify the copy before you simplify the math.
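Here is a sketch of how a metered add-on can leave the headline price untouched for light users. The plan is hypothetical: $49 a month with 10,000 exports included, and extra usage billed in blocks of 1,000 at $5 per block. `Decimal` keeps the money arithmetic exact.

```python
from decimal import Decimal


def monthly_bill(base_price: Decimal, included_units: int, used_units: int,
                 block_size: int, block_price: Decimal) -> Decimal:
    """Headline plan price plus overage billed in whole blocks above the included quota."""
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    overage = max(0, used_units - included_units)
    blocks = -(-overage // block_size)  # integer ceiling: a partial block counts as a full one
    return base_price + blocks * block_price


# Hypothetical plan: $49/month with 10,000 exports included, then $5 per extra 1,000.
light = monthly_bill(Decimal("49"), 10_000, 8_000, 1_000, Decimal("5"))
heavy = monthly_bill(Decimal("49"), 10_000, 12_500, 1_000, Decimal("5"))
print(light, heavy)  # 49 64
```

Rounding partial blocks up keeps invoices simple to explain. If it produces confused tickets, a per-unit rate is the obvious alternative to test.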

Test 4: The “concierge onboarding” surcharge (or credit)

For products where setup friction kills conversion, test an optional paid onboarding session or a higher tier that includes white-glove setup—in parallel with a self-serve path. You are measuring whether a subset will pay for time saved, which informs whether services belong in your revenue model at all.

This is especially relevant when AI features promise magic but still require human judgement to configure well. Some buyers want hand-holding more than a 20% off coupon.

Test 5: Grandfathering as a deliberate policy, not an accident

Raise prices for new customers only, keep loyal accounts on legacy rates for a defined period, and communicate the timeline. The test is not “will people complain?”—they might—but whether net revenue grows and retention among long-time users stays stable. Done transparently, this reinforces trust: existing customers see that you reward commitment; new customers pay today’s value.

Document the policy in plain language on your site or changelog. Ambiguous grandfathering creates resentment; explicit rules create predictability.
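Writing the rule down as code as well as in prose helps keep billing and the changelog in agreement. The following is a minimal sketch of a time-boxed grandfathering policy. The dates and prices (in cents) are hypothetical, and a real billing system would read them from configuration.

```python
from datetime import date

# Hypothetical policy values, for illustration only (prices in cents).
PRICE_CHANGE = date(2026, 5, 1)   # new list price applies to sign-ups from this day
LEGACY_UNTIL = date(2027, 5, 1)   # existing accounts keep the old rate until this day
OLD_PRICE_CENTS, NEW_PRICE_CENTS = 1_900, 2_400


def monthly_price_cents(signed_up: date, billing_date: date) -> int:
    """Price an account pays on `billing_date` under a time-boxed grandfathering rule."""
    if signed_up >= PRICE_CHANGE:
        return NEW_PRICE_CENTS    # new customers pay today's price
    if billing_date < LEGACY_UNTIL:
        return OLD_PRICE_CENTS    # loyal accounts keep the legacy rate for the announced period
    return NEW_PRICE_CENTS        # the announced legacy window has ended


print(monthly_price_cents(date(2025, 3, 1), date(2026, 6, 1)))
```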

What to measure so you are not fooling yourself

Signup counts alone are a vanity trap. Pair every pricing change with:

- Qualified trials or activations—did people reach the “aha” moment?

- Net revenue retention or simple cohort MRR—are new price points attracting churn-prone bargain hunters?

- Support and refund reasons—price objections often mask usability or expectation gaps.

- Time-to-payback on paid ads—if you run small campaigns, a “cheaper” plan that attracts more clicks but converts worse to paid can still destroy unit economics.
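Two of these metrics reduce to short formulas. Net revenue retention is (starting MRR + expansion − contraction − churned MRR) / starting MRR. CAC payback is acquisition cost per customer divided by monthly gross profit per customer. The sketch below uses made-up campaign numbers and an assumed 80% gross margin, which the list above does not specify. It shows that even if a cheaper plan wins more paying customers, lower revenue per customer can lengthen payback.

```python
def net_revenue_retention(start_mrr, expansion, contraction, churned):
    """NRR for one cohort over a period, including expansion revenue."""
    return (start_mrr + expansion - contraction - churned) / start_mrr


def cac_payback_months(ad_spend, new_paying_customers, monthly_arpu, gross_margin):
    """Months of gross profit needed to recover the acquisition cost of one customer."""
    if new_paying_customers <= 0:
        raise ValueError("need at least one paying customer")
    cac = ad_spend / new_paying_customers
    return cac / (monthly_arpu * gross_margin)


# Made-up numbers: the same $1,200 campaign pointed at a $40 plan vs a cheaper $30 plan.
print(f"NRR: {net_revenue_retention(10_000, 800, 300, 700):.0%}")
print(f"Payback at $40/mo: {cac_payback_months(1_200, 10, 40, 0.8):.1f} months")
print(f"Payback at $30/mo: {cac_payback_months(1_200, 12, 30, 0.8):.1f} months")
```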

Use minimum run lengths that respect your sales cycle. A two-day test on a product with a fourteen-day trial is astrology, not analytics.

Ethical guardrails that also improve signal

Do not hide cheaper plans from eligible users, do not fake countdown timers, and do not quote fake “original” prices. Regulatory attention aside, deceptive urgency attracts customers you do not want to support. Clean experiments outperform cynical ones over a multi-year horizon because they do not poison your brand’s reference points.

Tests that usually waste your runway

Random percentage-off pop-ups. They spike trials from people who were on the fence about trying, not about paying. You learn little about willingness to pay at full price, and you anchor every future conversation around the lower number.

Currency and display tricks. Rounding psychology (ending prices in 7 or 9) can nudge, but it is rarely the difference between a healthy business and a struggling one. Treat micro-format tweaks as polish after packaging and value proof are solid.

Competitor price matching without feature parity. If you chase a larger rival’s headline price while offering a narrower product, you inherit their number without their economies of scale. Match on outcomes, not on digits alone.

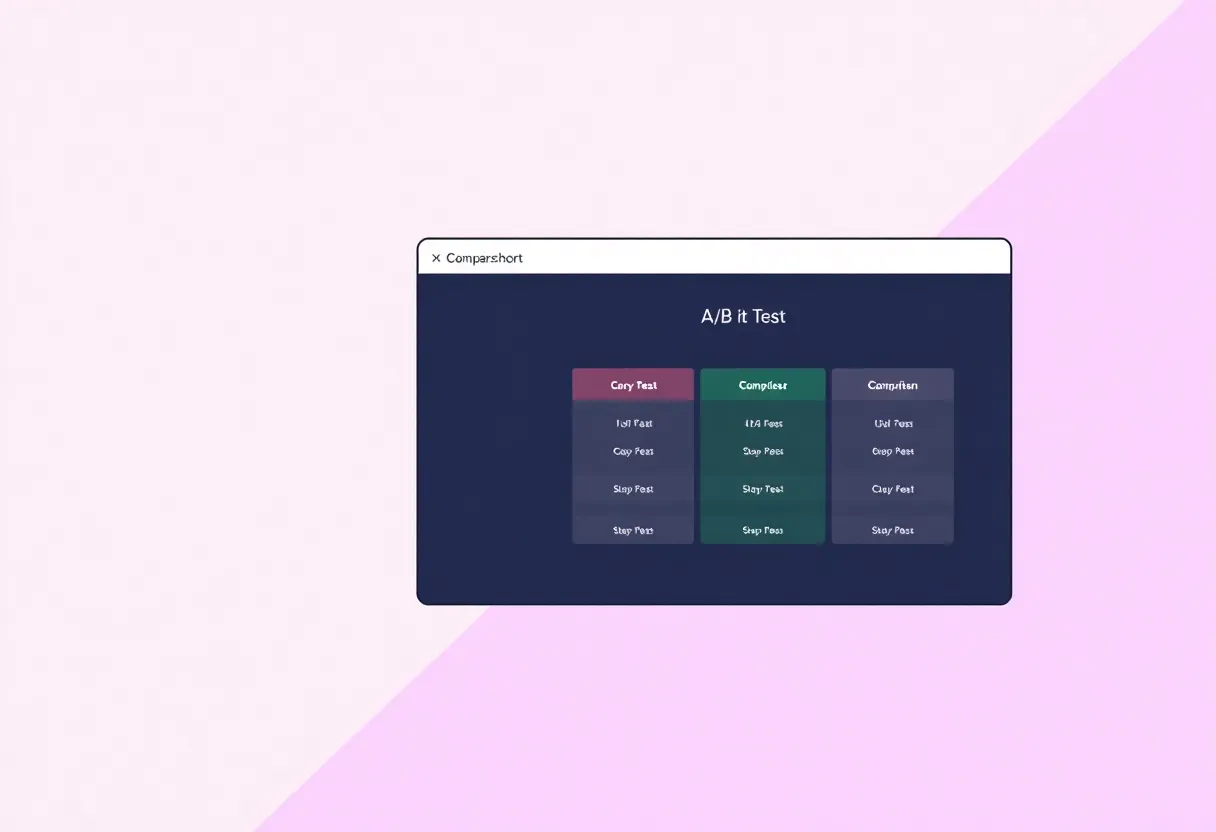

One-week multivariate chaos. Changing headline, annual toggle, feature table, and testimonial order simultaneously guarantees you will not know what moved the needle. Serialise changes or use proper experiment design if you truly have the traffic—which most indies do not.

When discounts still make sense

Limited, bounded incentives remain useful: launch cohorts for early believers, nonprofit programmes with clear eligibility, or partner bundles where someone else is co-marketing. The distinction is structural—those discounts are programmes with rules, not a permanent lever you pull whenever growth wobbles.

Another defensible pattern is rewarding behaviour you want more of: education (student plans), annual planning (pay early for the next fiscal year), or migration from a sunset competitor. The customer still feels seen; you still protect list price for everyone else.

A lightweight checklist before you ship a change

- Did we update help docs and in-app upgrade prompts so they match the new tiers?

- Do sales or support have a one-paragraph explanation they can paste when someone asks “what changed?”

- Are analytics events named so we can segment pre/post cohorts without spreadsheet archaeology?

- Did we set a calendar reminder to review churn and expansion thirty and ninety days out—not just conversion at seven days?
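For the analytics item, one low-effort convention is to tag every event with the pricing version the account signed up under, so pre/post cohorts fall out of a simple group-by. Below is a minimal sketch of that idea. The version labels, event names and in-memory storage are all hypothetical stand-ins for your account records and analytics tool.

```python
from collections import Counter

# Hypothetical label for the pricing currently live; bump it with every change.
CURRENT_PRICING_VERSION = "2026-04-tiers-v2"

# Version each account saw at signup; in practice this lives on the account record.
signup_version: dict[str, str] = {}
events: list[tuple[str, str, str]] = []  # (event name, account id, pricing version)


def track(name: str, account_id: str) -> None:
    """Record an event tagged with the pricing version the account signed up under."""
    if name == "signup":
        signup_version.setdefault(account_id, CURRENT_PRICING_VERSION)
    version = signup_version.get(account_id, "unknown")
    events.append((name, account_id, version))


def counts_by_version(name: str) -> Counter:
    """How many `name` events came from each pricing cohort."""
    return Counter(version for event, _, version in events if event == name)


track("signup", "acct-1")                       # signs up under the new pricing
signup_version["acct-0"] = "2026-01-tiers-v1"   # an older customer, backfilled
track("plan_upgraded", "acct-0")
track("plan_upgraded", "acct-1")
print(counts_by_version("plan_upgraded"))
```

Tagging by signup version rather than by the pricing live at event time is deliberate. An upgrade months later still belongs to the cohort that first saw the old or new page.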

If any box is unchecked, you are still allowed to launch, but you should expect noisier data and more firefighting. Pricing touches every team that talks to customers, even when your company is just you wearing four hats.

Putting it together

In 2026, indie SaaS pricing power comes from clarity: packaging that matches jobs-to-be-done, annual offers that buy loyalty rather than signal desperation, optional monetisation of heavy usage, and transparent policies when prices rise. The tests that still work are the ones that treat customers like they will still be around next year, because that is exactly the cohort you are building.

You do not need enterprise-grade experimentation infrastructure to learn faster than competitors—you need discipline about what you change, why it should matter, and how long you will wait before calling a result. That restraint is rarer than any coupon code—and it compounds.