Local LLM Inference on Apple Silicon: Unified Memory as a Performance Wildcard

April 8, 2026

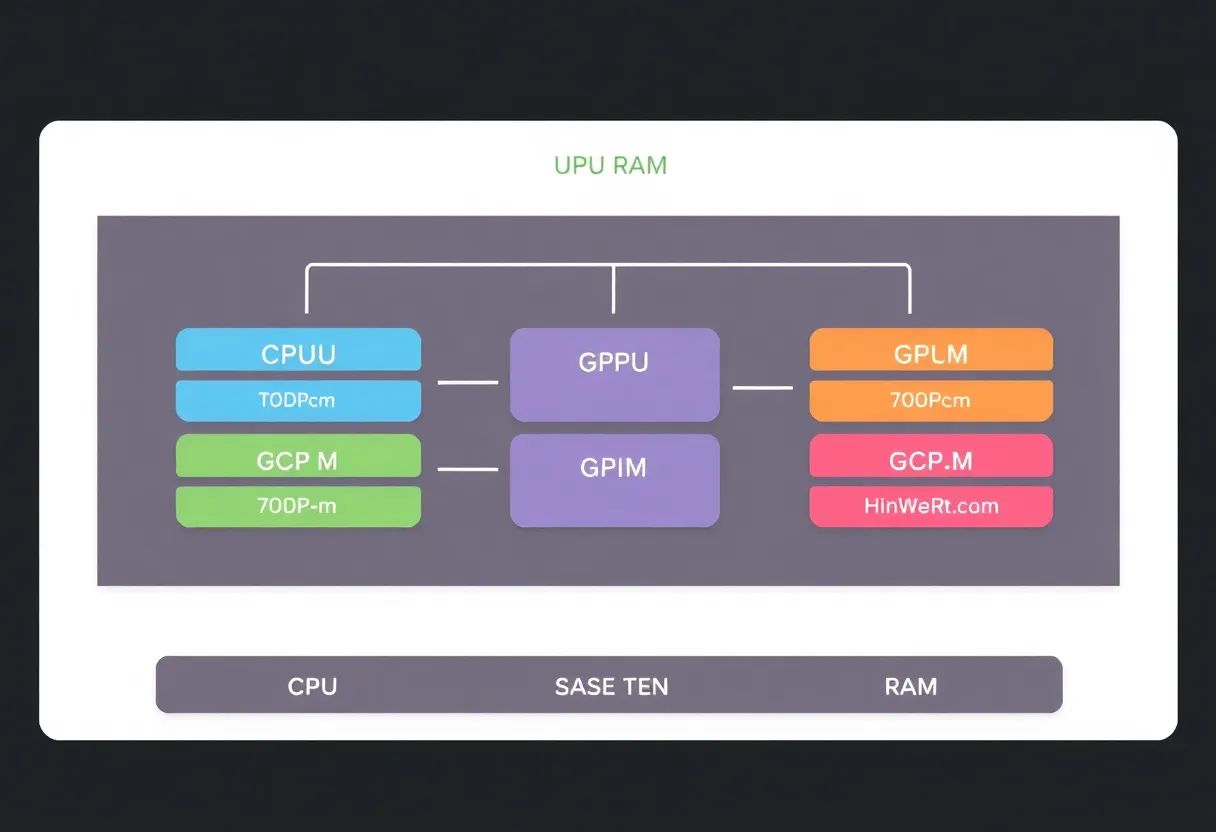

Running large language models on a laptop used to be a joke. Apple Silicon changed the punchline: wide memory buses, efficient cores, and a single pool of memory shared by the CPU, GPU, and Neural Engine mean a MacBook can host surprisingly large weights, provided you understand what unified memory actually buys you and where it still says no.

Why unified memory matters for LLMs

Transformer inference is often limited by memory bandwidth as much as by compute, especially during token-by-token generation, when the weights are read again for every new token. Traditional discrete-GPU laptops shuttle weights across PCIe; Apple’s unified pool lets accelerators and CPU share the same bytes without copying across a narrow link, as long as the software stack cooperates. That cooperation is not automatic: frameworks must target Metal and Metal Performance Shaders paths, and llama.cpp-family runners matured on macOS precisely because developers chased this advantage.
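
To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch. It assumes that generating each token streams every weight byte from memory once, so bandwidth divided by weight size gives a ceiling on decode speed. The 7 GB and 200 GB/s figures are hypothetical placeholders, not measurements of any particular chip or model.

```python
def decode_tokens_per_second_ceiling(weight_bytes: float,
                                     bandwidth_bytes_per_s: float) -> float:
    """Upper bound on decode speed if each token reads all weights once.

    Real throughput will be lower: this ignores KV cache reads,
    compute time, and framework overhead.
    """
    if weight_bytes <= 0:
        raise ValueError("weight_bytes must be positive")
    return bandwidth_bytes_per_s / weight_bytes


GB = 1e9

# Hypothetical numbers for illustration only.
weights = 7.0 * GB        # assumed size of the quantized weights
bandwidth = 200.0 * GB    # assumed memory bandwidth, bytes per second

ceiling = decode_tokens_per_second_ceiling(weights, bandwidth)
print(f"decode ceiling: {ceiling:.1f} tok/s")
```

If measured decode speed sits far below this ceiling, the bottleneck is probably somewhere other than raw memory bandwidth.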

Model size versus RAM reality

Quantization (4-bit, 5-bit, 8-bit) trades quality for footprint. A “13B” model does not need exactly 13 gigabytes of RAM in operation: the weights alone scale with bits per parameter (roughly 6.5 GB at 4-bit, 13 GB at 8-bit), and activations, the KV cache, and framework overhead come on top. Rule of thumb: budget generously, watch for swap thrash, and prefer models that fit comfortably below your physical RAM if you value responsiveness. Swap on SSD is better than swap on spinning rust; it is still slower than keeping the working set hot.
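
The budgeting rule of thumb can be sketched as a small estimator. This is a simplified model under stated assumptions: weights cost bits-per-weight times parameter count, the KV cache stores one key and one value vector per layer per token, and a fixed fraction of RAM is reserved for the OS and framework. The model shape below (40 layers, 40 KV heads, head dimension 128) and the 25% headroom are hypothetical values chosen for illustration.

```python
def weights_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_element: int = 2) -> int:
    """Approximate KV cache size; the factor 2 covers keys and values."""
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_element


def fits(total_ram_bytes: float, needed_bytes: float,
         headroom_fraction: float = 0.25) -> bool:
    """True if the estimate leaves the assumed headroom free."""
    return needed_bytes <= total_ram_bytes * (1 - headroom_fraction)


# Hypothetical 13B-parameter model at 4 bits per weight.
w = weights_bytes(13e9, 4)
kv = kv_cache_bytes(n_layers=40, n_kv_heads=40, head_dim=128,
                    context_tokens=4096)
needed = w + kv

print(f"weights:  {w / 1e9:.2f} GB")
print(f"KV cache: {kv / 1e9:.2f} GB")
print(f"fits in 16 GB with headroom: {fits(16e9, needed)}")
```

Real quantization formats carry some per-block metadata, so actual files run slightly larger than this estimate.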

Throughput versus latency

Batching improves tokens per second for offline jobs; interactive chat wants predictable latency. Tune context sizes—monster prompts look cool until prefill eats your budget. For coding assistants, retrieval plus smaller local models sometimes beats a single huge model on the same silicon.
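
A quick way to see why prompt size matters for interactive use is to split response time into prefill (processing the prompt) and decode (generating output). The sketch below assumes both phases run at a constant rate; the rates and token counts are hypothetical and should be replaced with numbers measured on your own machine.

```python
def response_time_seconds(prompt_tokens: int, output_tokens: int,
                          prefill_tok_per_s: float,
                          decode_tok_per_s: float) -> tuple[float, float]:
    """Return (time_to_first_token, total_time) under constant-rate assumptions."""
    time_to_first_token = prompt_tokens / prefill_tok_per_s
    total = time_to_first_token + output_tokens / decode_tok_per_s
    return time_to_first_token, total


# Hypothetical rates: 400 tok/s prefill, 20 tok/s decode.
for prompt in (500, 8000):
    ttft, total = response_time_seconds(prompt, 200, 400.0, 20.0)
    print(f"prompt={prompt:>5} tokens: first token after {ttft:.1f}s, "
          f"done after {total:.1f}s")
```

Under these assumed rates, the longer prompt spends more time in prefill than in generating the answer itself.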

Thermal envelopes and sustained clocks

Laptops throttle. A 30-minute benchmark is not a three-hour coding session, so measure throughput over a run as long as your real work. Monitor power metrics (macOS ships a powermetrics command-line tool), elevate the chassis, and remember fans are part of the algorithm. Silent mode trades peak tok/s for comfort; pick consciously.
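
One simple way to quantify throttling is to compare throughput early in a session with throughput late in the same session. The sketch below assumes you have already logged tokens-per-second samples in chronological order; the function name, window size, and sample values are illustrative, not part of any benchmarking tool.

```python
from statistics import mean


def sustained_ratio(samples: list[float], window: int = 3) -> float:
    """Ratio of late-session to early-session throughput.

    A value near 1.0 means throughput held steady; lower values
    suggest throttling (or other slowdowns) over the session.
    """
    if window < 1 or len(samples) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    early = mean(samples[:window])
    late = mean(samples[-window:])
    return late / early


# Hypothetical tok/s readings taken at regular intervals.
readings = [30, 30, 30, 27, 25, 24, 24, 24]
print(f"sustained / initial throughput: {sustained_ratio(readings):.2f}")
```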

Software stacks: moving targets

llama.cpp, MLX, and vendor experiments evolve weekly. Pin versions, read release notes, and snapshot working combinations. Nothing hurts reproducibility like “it worked yesterday” with no lockfile recording the runner version and the checksum of your model weights.
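
A minimal sketch of such a lockfile, using only the Python standard library: it hashes the weights file in chunks and records the digest next to a version string you supply. The JSON layout, field names, and file names are assumptions made for this example, not a format used by llama.cpp or MLX.

```python
import hashlib
import json
from pathlib import Path


def sha256_of_file(path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files need not fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_lockfile(model_path, runner_version: str, lock_path) -> dict:
    """Record the model checksum and runner version (hypothetical layout)."""
    entry = {
        "model_file": Path(model_path).name,
        "sha256": sha256_of_file(model_path),
        "runner_version": runner_version,
    }
    Path(lock_path).write_text(json.dumps(entry, indent=2) + "\n")
    return entry


def verify_lockfile(model_path, lock_path) -> bool:
    """True if the model file still matches the recorded checksum."""
    entry = json.loads(Path(lock_path).read_text())
    return entry["sha256"] == sha256_of_file(model_path)
```

Call `write_lockfile` once when a combination works, commit the resulting file alongside your scripts, and run `verify_lockfile` before a benchmark or a debugging session to rule out a silently changed model file.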

Privacy and compliance upsides

Local inference keeps prompts off third-party servers, which is valuable for NDAs and regulated data. It does not remove responsibility: logging, disk encryption, and shoulder-surfing still matter. Air-gapped is a process, not a checkbox.

When cloud still wins

Giant context windows, frontier multimodal models, and elastic scale belong in datacenters. Apple Silicon local models shine for drafts, offline travel, and cost control—not for every task. Hybrid workflows—small local model for triage, cloud for heavy lifting—are often optimal.
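
A hybrid workflow can start as a few explicit routing rules. The sketch below is one possible policy, not a recommendation: the `Request` fields, the 8192-token local limit, and the rule that confidential requests always stay local are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt_tokens: int
    needs_images: bool = False
    confidential: bool = False


def route(req: Request, local_context_limit: int = 8192) -> str:
    """Return "local" or "cloud" for a request under a simple policy."""
    # Assumed policy: confidential data never leaves the machine,
    # even if the local model will handle the request poorly.
    if req.confidential:
        return "local"
    # Multimodal input or oversized prompts go to the datacenter.
    if req.needs_images or req.prompt_tokens > local_context_limit:
        return "cloud"
    return "local"


print(route(Request(prompt_tokens=500)))
print(route(Request(prompt_tokens=50_000)))
print(route(Request(prompt_tokens=50_000, confidential=True)))
```

Keeping the rules this explicit makes it easy to audit which data can reach a third-party server.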

Unified memory is not magic; it is a better hose between parts that used to choke on PCIe. Respect RAM budgets, measure real sessions, and treat local LLMs like any performance-sensitive service: profile first, argue about frameworks second.