Monorepo CI Time vs Developer UX: Where Turborepo Stops Helping

April 8, 2026

Monorepos promise one repository to rule them all—shared libraries, consistent tooling, atomic refactors across packages. They also concentrate pain: a single pull request can fan out into CI jobs that rebuild half the company. Task runners like Turborepo and Nx exist to make builds incremental, cacheable, and parallel. They help—until the graph gets weird, secrets leak into caches, or developers wait on remote cache misses that feel like dial-up. This article separates what these tools fix from what they cannot: organizational boundaries, flaky tests, and the human cost of long feedback loops.

Names in this space proliferate—pnpm workspaces, Yarn Berry, Rush, Bazel, Buck2—but the user-facing question stays constant: how fast can a developer know they are safe to merge?

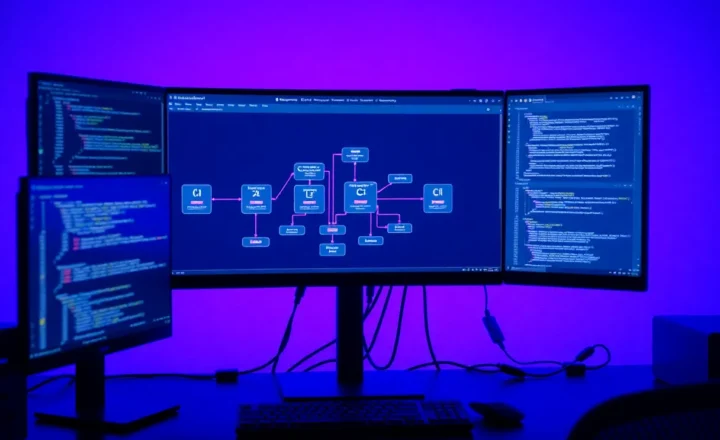

The shape of the problem

In a polyrepo world, each service owns its pipeline; blast radius is smaller, duplication is larger. In a monorepo, shared infrastructure means shared queues. CI time becomes a coordination tax: every minute in the critical path is multiplied across engineers who touch the same graph.

“Works on my machine” escalates to “works in my partial graph.” Task orchestration tools attack that by modeling dependencies between packages and tasks, skipping unchanged work, and distributing cache across machines.

What Turborepo-style caching actually does

At a high level, these systems hash inputs—source files, environment variables declared as dependencies, lockfiles—and map hashes to outputs. Cache hits short-circuit compilation and tests. Remote caches let CI share artifacts with laptops and vice versa, shrinking cold starts.
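A minimal sketch of the hashing idea, assuming a task's inputs are already loaded into memory. The cache key is a digest over file contents (sorted by path so ordering is stable), the values of declared environment variables, and the lockfile. `TaskInputs` and `taskHash` are hypothetical names, and a toy FNV-1a hash stands in for the cryptographic digest (such as SHA-256) a real tool would use.

```typescript
// Hypothetical shape of everything a task declares as input.
interface TaskInputs {
  files: Record<string, string>; // path -> file contents
  declaredEnv: string[]; // env var names that participate in the hash
  env: Record<string, string | undefined>; // the full environment at run time
  lockfile: string; // lockfile contents
}

// Toy 32-bit FNV-1a over UTF-16 code units; a real cache would use SHA-256 or similar.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    // Multiply by the FNV prime 16777619 (2^24 + 2^8 + 0x93) using shifts, wrapping to 32 bits.
    hash = (hash + (hash << 1) + (hash << 4) + (hash << 7) + (hash << 8) + (hash << 24)) >>> 0;
  }
  return hash.toString(16).padStart(8, "0");
}

function taskHash(inputs: TaskInputs): string {
  const parts: string[] = [];
  // Sort paths so the key does not depend on directory-listing order.
  for (const path of Object.keys(inputs.files).sort()) {
    parts.push(`file:${path}:${fnv1a(inputs.files[path])}`);
  }
  // Only declared env vars participate; everything else is invisible to the cache.
  for (const name of [...inputs.declaredEnv].sort()) {
    parts.push(`env:${name}=${JSON.stringify(inputs.env[name] ?? null)}`);
  }
  parts.push(`lock:${fnv1a(inputs.lockfile)}`);
  return fnv1a(parts.join("\n"));
}

const base: TaskInputs = {
  files: { "src/index.ts": "export const answer = 42;" },
  declaredEnv: ["API_URL"],
  env: { API_URL: "https://staging.example.com", HOME: "/home/alice" },
  lockfile: "lockfileVersion: '6.0'",
};
const otherLaptop: TaskInputs = { ...base, env: { ...base.env, HOME: "/home/bob" } };
console.log(taskHash(base) === taskHash(otherLaptop)); // true: HOME is not declared
```

The same property cuts both ways: an undeclared variable that *does* change the output will still produce a cache hit, which is why undeclared reads are dangerous.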

Teams sometimes misunderstand remote cache as “magic cloud compile.” It is not—it is a content-addressed store. If two developers get different outputs from identical hashes, you have a bug in task definitions or toolchain drift (Node patch versions, JDK builds). Pin toolchains; use Volta, nvm, or asdf manifests and include them in cache keys.
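The failure mode described above can be audited after the fact. This is a sketch, assuming a hypothetical log of cache uploads that records each task's input hash, a hash of the produced outputs, and the machine's Node version. Any input hash that maps to more than one distinct output points at an undeclared input or toolchain drift. Here the Node version is deliberately left out of the input hash, which is exactly the gap that pinning toolchains and including their versions in the key closes.

```typescript
// One cache upload as seen by a hypothetical audit job.
interface CacheReport {
  inputHash: string; // hash of declared task inputs
  outputHash: string; // hash of produced artifacts
  machine: string;
  nodeVersion: string; // recorded for diagnosis, NOT part of inputHash in this sketch
}

// Returns input hashes that mapped to more than one distinct output.
function findDivergentHashes(reports: CacheReport[]): Map<string, CacheReport[]> {
  const byInput = new Map<string, CacheReport[]>();
  for (const r of reports) {
    const list = byInput.get(r.inputHash) ?? [];
    list.push(r);
    byInput.set(r.inputHash, list);
  }
  const divergent = new Map<string, CacheReport[]>();
  for (const [hash, list] of byInput) {
    const outputs = new Set(list.map((r) => r.outputHash));
    if (outputs.size > 1) divergent.set(hash, list);
  }
  return divergent;
}

const reports: CacheReport[] = [
  { inputHash: "abc", outputHash: "o1", machine: "laptop-1", nodeVersion: "20.11.0" },
  { inputHash: "abc", outputHash: "o2", machine: "ci-runner", nodeVersion: "20.11.1" },
  { inputHash: "def", outputHash: "o3", machine: "laptop-1", nodeVersion: "20.11.0" },
  { inputHash: "def", outputHash: "o3", machine: "ci-runner", nodeVersion: "20.11.1" },
];
for (const [hash, list] of findDivergentHashes(reports)) {
  console.log(hash, list.map((r) => `${r.machine}:node@${r.nodeVersion}`).join(", "));
}
// abc laptop-1:node@20.11.0, ci-runner:node@20.11.1
```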

That model is powerful when tasks are pure: deterministic given inputs. It breaks when tasks reach out to the network without declaring it, embed timestamps, or write randomness into outputs. Suddenly you are debugging nondeterminism masked as “sometimes cache miss.”
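One cheap way to catch impure tasks before they poison a shared cache is to run the same task several times on identical inputs and demand byte-identical output. In this sketch the "tasks" are plain functions and `impureBuild` deliberately embeds a random build ID. A real check would run the actual build command in clean checkouts and compare hashes of the files it writes.

```typescript
type Task = (source: string) => string;

// Deterministic: output depends only on the input.
const pureBuild: Task = (source) => `// built\n${source.trim()}\n`;

// Impure: embeds a random build ID, so identical inputs yield different outputs.
const impureBuild: Task = (source) =>
  `// build ${Math.random().toString(36).slice(2)}\n${source.trim()}\n`;

// Run the task several times on the same input and require identical output.
function isReproducible(task: Task, source: string, runs = 3): boolean {
  const first = task(source);
  for (let i = 1; i < runs; i++) {
    if (task(source) !== first) return false;
  }
  return true;
}

const source = "export const version = '1.0.0';";
console.log(isReproducible(pureBuild, source)); // true
console.log(isReproducible(impureBuild, source)); // false
```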

Where developer UX still suffers

First, affected-package detection is only as good as your dependency graph. Misdeclared dependencies mean either too much work (safe but slow) or too little (fast but dangerous). Second, long-running tests at the top of the graph block merges; caching does not fix tests that must run every time because they guard invariants. Third, developer laptops are not CI farms: local parallelism hits thermal limits, and remote execution helps but adds setup.
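One guard against the "fast but dangerous" case is to scan each package's source for imports of other workspace packages and compare them with what its manifest declares. The regex-based import scan and the `@acme/` scope are assumptions for illustration; a production check would more likely use the TypeScript compiler API or an existing lint rule than regular expressions.

```typescript
interface WorkspacePackage {
  name: string;
  declaredDeps: string[]; // from package.json dependencies/devDependencies
  sources: Record<string, string>; // file path -> contents
}

// Matches `from "x"`, `import("x")` and `require("x")`; good enough for a sketch.
const IMPORT_RE = /(?:from\s+|import\s*\(\s*|require\s*\(\s*)["']([^"']+)["']/g;

// Reduce a specifier like "@acme/logger/format" to its package name "@acme/logger".
function packageNameOf(specifier: string): string {
  const parts = specifier.split("/");
  return specifier.startsWith("@") ? parts.slice(0, 2).join("/") : parts[0];
}

// Workspace packages imported in source but missing from the manifest.
function undeclaredWorkspaceImports(pkg: WorkspacePackage, workspaceNames: Set<string>): string[] {
  const missing = new Set<string>();
  for (const text of Object.values(pkg.sources)) {
    for (const match of text.matchAll(IMPORT_RE)) {
      const name = packageNameOf(match[1]);
      if (workspaceNames.has(name) && name !== pkg.name && !pkg.declaredDeps.includes(name)) {
        missing.add(name);
      }
    }
  }
  return [...missing].sort();
}

const workspace = new Set(["@acme/logger", "@acme/ui", "@acme/web"]);
const web: WorkspacePackage = {
  name: "@acme/web",
  declaredDeps: ["@acme/ui", "react"],
  sources: {
    "src/app.ts": `import { Button } from "@acme/ui";\nimport { log } from "@acme/logger/format";`,
  },
};
console.log(undeclaredWorkspaceImports(web, workspace)); // [ '@acme/logger' ]
```

Without that declaration, a graph-based "affected" computation would not rebuild `@acme/web` when the logger changes.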

Tooling cannot negotiate product deadlines or split teams. If two orgs share a repo but not priorities, you get sociotechnical contention that manifests as pipeline conflict storms.

Local development loops vs CI fidelity

Developers want sub-second feedback for trivial edits and minute-scale for integration checks. CI wants reproducibility. Those goals collide when local shortcuts—mock servers, stubbed env—diverge from production-like harnesses. Task runners help align commands (`turbo run test` locally mirrors CI) but cannot fix semantic drift between environments.

Preview environments and ephemeral databases have narrowed the gap, yet monorepos amplify configuration surface area. Version skew in shared packages can pass locally if `node_modules` resolution differs from CI, so lockfile discipline and a single, consistent package manager matter as much as caching.
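Version skew is easy to detect mechanically. This sketch assumes workspace manifests have already been parsed into plain objects (only the `dependencies` field is read) and reports every external package that different workspaces request with different version ranges. The package names and ranges are made up.

```typescript
interface Manifest {
  name: string;
  dependencies?: Record<string, string>;
}

// Map each dependency to the ranges requested across the workspace,
// then keep only those requested with more than one distinct range.
function findVersionSkew(manifests: Manifest[]): Record<string, Record<string, string[]>> {
  const ranges = new Map<string, Map<string, string[]>>(); // dep -> range -> requesting packages
  for (const m of manifests) {
    for (const [dep, range] of Object.entries(m.dependencies ?? {})) {
      const byRange = ranges.get(dep) ?? new Map<string, string[]>();
      byRange.set(range, [...(byRange.get(range) ?? []), m.name]);
      ranges.set(dep, byRange);
    }
  }
  const skew: Record<string, Record<string, string[]>> = {};
  for (const [dep, byRange] of ranges) {
    if (byRange.size > 1) skew[dep] = Object.fromEntries(byRange);
  }
  return skew;
}

const manifests: Manifest[] = [
  { name: "@acme/web", dependencies: { react: "^18.2.0", zod: "^3.22.0" } },
  { name: "@acme/admin", dependencies: { react: "^18.2.0", zod: "^3.20.0" } },
];
// Reports zod (two ranges); react is requested consistently and is omitted.
console.log(findVersionSkew(manifests));
```

The check is conservative: two ranges may still resolve to the same version through the lockfile, but flagging them keeps intent explicit.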

Flaky tests defeat every optimizer

A nondeterministic test poisons confidence: teams rerun pipelines, bust caches, or quarantine tests. No hash-based cache can make a flaky suite cheap; caching a lucky pass only hides the flakiness until a cache miss reruns the test and it fails, often late and on an unrelated change. Fix or delete flaky tests before buying more build hardware.
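A practical flake signal, sketched here over a hypothetical export of CI results: a test that both passed and failed on the same commit cannot be explained by a code change, so it is flaky by definition. The `TestRun` shape is an assumption; most CI systems can export something equivalent.

```typescript
interface TestRun {
  test: string;
  commit: string;
  passed: boolean;
}

// A test is flagged when at least one commit saw both outcomes for it.
function findFlakyTests(runs: TestRun[]): string[] {
  const outcomes = new Map<string, Map<string, Set<boolean>>>(); // test -> commit -> outcomes
  for (const run of runs) {
    const byCommit = outcomes.get(run.test) ?? new Map<string, Set<boolean>>();
    const seen = byCommit.get(run.commit) ?? new Set<boolean>();
    seen.add(run.passed);
    byCommit.set(run.commit, seen);
    outcomes.set(run.test, byCommit);
  }
  const flaky: string[] = [];
  for (const [test, byCommit] of outcomes) {
    if ([...byCommit.values()].some((seen) => seen.size === 2)) flaky.push(test);
  }
  return flaky.sort();
}

const runs: TestRun[] = [
  { test: "checkout.spec", commit: "c1", passed: true },
  { test: "checkout.spec", commit: "c1", passed: false }, // same commit, different result
  { test: "cart.spec", commit: "c1", passed: false },
  { test: "cart.spec", commit: "c2", passed: true }, // fixed by a code change, not flaky
];
console.log(findFlakyTests(runs)); // [ 'checkout.spec' ]
```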

CI minutes are not free

Cloud bills scale with parallelism and artifact storage. Remote caches grow; retention policies matter. Security teams ask whether cache buckets could leak build artifacts across branches—configure isolation, signed URLs, and branch-scoped namespaces.
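Retention and branch isolation can both be expressed in how entries are keyed and evicted. This sketch assumes a hypothetical in-memory index of cache entries; the `<branch>/<hash>` key layout and the 14-day default are illustrative choices, not settings of any particular remote-cache product. Signed URLs are out of scope here.

```typescript
interface CacheEntry {
  key: string; // "<branch>/<taskHash>"
  sizeBytes: number;
  lastAccessMs: number;
}

// Namespace keys by branch; encoding keeps "feature/x" from colliding with nested paths.
function scopedKey(branch: string, taskHash: string): string {
  return `${encodeURIComponent(branch)}/${taskHash}`;
}

// Drop entries idle longer than maxAgeDays, then keep the most recently used
// entries until the size budget is reached (strict LRU: older entries are evicted).
function applyRetention(
  entries: CacheEntry[],
  nowMs: number,
  maxAgeDays = 14,
  maxBytes = Infinity,
): CacheEntry[] {
  const cutoff = nowMs - maxAgeDays * 24 * 60 * 60 * 1000;
  const fresh = entries
    .filter((e) => e.lastAccessMs >= cutoff)
    .sort((a, b) => b.lastAccessMs - a.lastAccessMs); // most recent first
  const kept: CacheEntry[] = [];
  let total = 0;
  for (const e of fresh) {
    if (total + e.sizeBytes > maxBytes) break;
    kept.push(e);
    total += e.sizeBytes;
  }
  return kept;
}

const day = 24 * 60 * 60 * 1000;
const now = 100 * day;
const entries: CacheEntry[] = [
  { key: scopedKey("main", "aaa"), sizeBytes: 400, lastAccessMs: now - 1 * day },
  { key: scopedKey("feature/login", "bbb"), sizeBytes: 300, lastAccessMs: now - 2 * day },
  { key: scopedKey("main", "ccc"), sizeBytes: 500, lastAccessMs: now - 3 * day },
  { key: scopedKey("main", "ddd"), sizeBytes: 100, lastAccessMs: now - 30 * day },
];
console.log(applyRetention(entries, now, 14, 800).map((e) => e.key));
// [ 'main/aaa', 'feature%2Flogin/bbb' ]
```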

Also watch egress: large artifacts pulled repeatedly from remote cache to ephemeral runners can rival recomputation costs. Compression, chunking, and keeping artifacts lean beat brute-force caching.
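The trade-off is simple arithmetic. This is a rough sketch, assuming you know (or can sample) artifact size, effective runner bandwidth, and how long the task takes to recompute. The numbers are invented, and a real decision would also weigh the egress price, not just time.

```typescript
interface ArtifactProfile {
  sizeMB: number; // compressed artifact size
  bandwidthMBps: number; // effective download throughput on the runner
  fetchOverheadSec: number; // request latency, auth, decompression
  recomputeSec: number; // time to rebuild from scratch
}

function fetchSeconds(p: ArtifactProfile): number {
  return p.fetchOverheadSec + p.sizeMB / p.bandwidthMBps;
}

// Fetching only pays off when it beats recomputation.
function shouldFetch(p: ArtifactProfile): boolean {
  return fetchSeconds(p) < p.recomputeSec;
}

const bundle: ArtifactProfile = { sizeMB: 1200, bandwidthMBps: 50, fetchOverheadSec: 3, recomputeSec: 20 };
console.log(fetchSeconds(bundle), shouldFetch(bundle)); // 27 false
const typecheck: ArtifactProfile = { sizeMB: 100, bandwidthMBps: 50, fetchOverheadSec: 2, recomputeSec: 45 };
console.log(fetchSeconds(typecheck), shouldFetch(typecheck)); // 4 true
```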

Ownership and CODEOWNERS reality

Monorepos without clear ownership become everyone’s problem—merge queues stall while unrelated reviewers get pinged. Strong CODEOWNERS, package boundaries, and RFC processes reduce thrash. Tools accelerate builds; they do not assign accountability.
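GitHub's CODEOWNERS resolves ownership with the last matching pattern taking precedence, so rule order matters as much as coverage. The matcher below is a deliberately simplified sketch that supports only leading-slash directory prefixes such as `/packages/ui/` and a catch-all `*`. Real CODEOWNERS patterns follow gitignore-style rules, which this does not implement, and the team handles are hypothetical.

```typescript
interface OwnerRule {
  pattern: string; // "*" or a directory prefix like "/packages/ui/"
  owners: string[];
}

// Only "*" and leading-slash directory prefixes are supported in this sketch.
function matches(pattern: string, path: string): boolean {
  if (pattern === "*") return true;
  return ("/" + path).startsWith(pattern);
}

// Later rules override earlier ones, so scan from the bottom up.
function ownersFor(path: string, rules: OwnerRule[]): string[] {
  for (let i = rules.length - 1; i >= 0; i--) {
    if (matches(rules[i].pattern, path)) return rules[i].owners;
  }
  return [];
}

const rules: OwnerRule[] = [
  { pattern: "*", owners: ["@acme/platform"] },
  { pattern: "/packages/ui/", owners: ["@acme/design-system"] },
  { pattern: "/packages/ui/charts/", owners: ["@acme/data-viz"] },
];
console.log(ownersFor("packages/ui/charts/bar.tsx", rules)); // [ '@acme/data-viz' ]
console.log(ownersFor("packages/ui/button.tsx", rules)); // [ '@acme/design-system' ]
console.log(ownersFor("tools/release.ts", rules)); // [ '@acme/platform' ]
```

Putting the catch-all last would silently make the platform team own everything, which is the kind of ordering mistake that pings unrelated reviewers.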

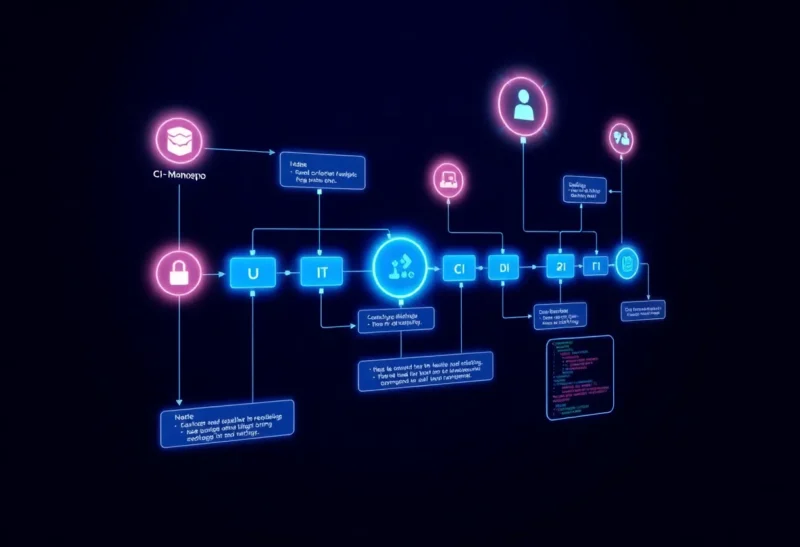

Patterns that keep UX acceptable

- Layered CI: fast lint/typecheck on every push; heavy integration tests on a schedule (for example nightly) unless a change touches the code they cover.

- Sharding: split tests by package ownership to reduce single-queue bottlenecks.

- Merge queues: batch merges to amortize validation—trade latency for throughput.

- Explicit env contracts: document which env vars participate in hashes; forbid undeclared reads.

- Path filters: CI triggers scoped to changed paths—careful with shared infrastructure packages.
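The "explicit env contracts" item can be enforced at runtime. This is a minimal sketch, assuming the task receives its environment as a plain object (in Node you would pass `process.env`). A `Proxy` throws on any read of a variable that is not on the declared list, so undeclared inputs fail loudly instead of silently bypassing the cache key. `strictEnv` is a hypothetical helper, and it only guards property reads, not `in` checks or enumeration.

```typescript
type Env = Record<string, string | undefined>;

function strictEnv(env: Env, declared: readonly string[]): Env {
  const allowed = new Set(declared);
  return new Proxy(env, {
    get(target, prop, receiver) {
      // Symbol reads (e.g. from inspection utilities) are not env lookups; pass them through.
      if (typeof prop === "symbol") return Reflect.get(target, prop, receiver);
      if (!allowed.has(prop)) {
        throw new Error(`Undeclared env var read: ${prop}. Add it to the task's env list.`);
      }
      return Reflect.get(target, prop, receiver);
    },
  });
}

const env = strictEnv({ API_URL: "https://example.test", CI: "true" }, ["API_URL"]);
console.log(env.API_URL); // https://example.test
try {
  console.log(env.CI);
} catch (err) {
  console.log((err as Error).message); // Undeclared env var read: CI. Add it to the task's env list.
}
```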

Path filters are a double-edged sword: they speed CI until someone changes a shared logger and the half of the repo that depends on it goes untested. Combine them with dependency-aware analysis rather than naive directory globs.
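A sketch of what dependency-aware analysis means, assuming a hypothetical workspace where each package lives under `packages/<name>/` and its internal dependencies are known. Changed files are mapped to packages, then the reverse dependency graph is walked so every dependent is included. A naive directory glob stops after the first step; files outside `packages/` are ignored here, whereas a real setup would typically treat root config changes as affecting everything.

```typescript
// Package -> the workspace packages it depends on (hypothetical layout).
type DepGraph = Record<string, string[]>;

function packageForPath(path: string): string | undefined {
  const match = /^packages\/([^/]+)\//.exec(path);
  return match?.[1];
}

function affectedPackages(changedFiles: string[], graph: DepGraph): string[] {
  // Invert the graph: package -> packages that depend on it.
  const dependents = new Map<string, string[]>();
  for (const [pkg, deps] of Object.entries(graph)) {
    for (const dep of deps) {
      dependents.set(dep, [...(dependents.get(dep) ?? []), pkg]);
    }
  }
  // Seed with directly changed packages, then walk dependents breadth-first.
  const affected = new Set<string>();
  const queue: string[] = [];
  for (const file of changedFiles) {
    const pkg = packageForPath(file);
    if (pkg !== undefined && !affected.has(pkg)) {
      affected.add(pkg);
      queue.push(pkg);
    }
  }
  while (queue.length > 0) {
    const current = queue.shift()!;
    for (const dependent of dependents.get(current) ?? []) {
      if (!affected.has(dependent)) {
        affected.add(dependent);
        queue.push(dependent);
      }
    }
  }
  return [...affected].sort();
}

const graph: DepGraph = {
  logger: [],
  api: ["logger"],
  web: ["api", "ui"],
  ui: [],
  docs: [],
};
console.log(affectedPackages(["packages/logger/src/index.ts"], graph)); // [ 'api', 'logger', 'web' ]
```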

When to reconsider boundaries

If distinct products share little code but share CI, maybe they should not share a repo. Extraction is costly; so is eternal build time. Treat repository splits as architecture decisions, not taboos.

Conversely, if teams duplicate CI config across dozens of repos, you might consolidate templates rather than code—shared actions/pipelines with per-repo knobs—gaining hygiene without mega-graph contention.

Measuring what developers feel

Track time-to-first-signal (lint), time to a mergeable green build, and rerun rate after failures. Cache hit ratio is a means; developer calendar time is the end. Survey developers quarterly, because metrics miss the frustration of flaky queues.
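Percentiles are more honest than averages here, because a few slow pipelines dominate how CI feels. This is a minimal sketch using the nearest-rank percentile definition over hypothetical per-PR records; the field names and sample data are invented.

```typescript
interface PrRecord {
  minutesToGreen: number; // last push -> mergeable green build
  reruns: number; // manual pipeline reruns after a failure
}

// Nearest-rank percentile: the smallest value with at least p% of samples at or below it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)];
}

function summarize(records: PrRecord[]) {
  const times = records.map((r) => r.minutesToGreen);
  const rerunRate = records.filter((r) => r.reruns > 0).length / records.length;
  return { p50: percentile(times, 50), p90: percentile(times, 90), rerunRate };
}

const records: PrRecord[] = [12, 14, 15, 16, 18, 19, 22, 25, 41, 95].map((m, i) => ({
  minutesToGreen: m,
  reruns: i % 4 === 0 ? 1 : 0,
}));
console.log(summarize(records)); // { p50: 18, p90: 41, rerunRate: 0.3 }
```

On this sample the mean is 27.7 minutes, which describes neither the typical PR (p50 of 18) nor the painful tail.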

Choosing orchestration: Turborepo, Nx, Bazel-flavored flows

Turborepo leans into JavaScript/TypeScript ecosystems with minimal config and strong remote-cache ergonomics, which makes it a good fit when your graph is shaped like npm workspaces and you want incremental adoption. Nx adds deeper project-graph introspection, code generators, and module-boundary enforcement, which helps when many apps share Angular/React scaffolds and you want lint-level policy on dependencies. Bazel-style tools trade flexibility for hermeticity: powerful at Google scale, heavier to adopt for mid-size web shops.

No winner is universal; the winner is the one your team will maintain. A modest Makefile with honest targets sometimes beats a half-configured graph nobody dares touch.

Docker, hermetic builds, and cache friendliness

Containerized builds improve parity but can invalidate caches if image tags float. Pin base images, copy dependency manifests and install dependencies before copying source so that source edits do not invalidate the install layer, and avoid baking secrets into layers. Multi-stage builds help separate install from compile, shrinking invalidation surfaces.
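The ordering advice can be illustrated with a toy model of layer caching, where each layer's key chains its parent's key with its instruction and the contents of the files it copies. This is a simplified model written for illustration, not how Docker or BuildKit computes cache keys internally, but it captures the invalidation rule: once one layer's key changes, every later layer rebuilds.

```typescript
interface Layer {
  instruction: string;
  copies?: string[]; // paths whose contents feed this layer
}

// Toy chained key: parent key + instruction + contents of copied files.
function layerKeys(layers: Layer[], files: Record<string, string>): string[] {
  const keys: string[] = [];
  let parent = "";
  for (const layer of layers) {
    const inputs = (layer.copies ?? []).map((p) => `${p}=${files[p] ?? ""}`).join(";");
    parent = `${parent}>${layer.instruction}[${inputs}]`;
    keys.push(parent);
  }
  return keys;
}

// Number of layers whose key changed between two file snapshots.
function rebuiltLayers(layers: Layer[], before: Record<string, string>, after: Record<string, string>): number {
  const oldKeys = layerKeys(layers, before);
  return layerKeys(layers, after).filter((key, i) => key !== oldKeys[i]).length;
}

const depsFirst: Layer[] = [
  { instruction: "FROM node:20.11.1-slim" },
  { instruction: "COPY package.json pnpm-lock.yaml", copies: ["package.json", "pnpm-lock.yaml"] },
  { instruction: "RUN pnpm install --frozen-lockfile" },
  { instruction: "COPY src", copies: ["src/index.ts"] },
  { instruction: "RUN pnpm build" },
];
const sourceFirst: Layer[] = [
  { instruction: "FROM node:20.11.1-slim" },
  { instruction: "COPY .", copies: ["package.json", "pnpm-lock.yaml", "src/index.ts"] },
  { instruction: "RUN pnpm install --frozen-lockfile" },
  { instruction: "RUN pnpm build" },
];
const before = { "package.json": "{}", "pnpm-lock.yaml": "v1", "src/index.ts": "a" };
const after = { ...before, "src/index.ts": "b" };
console.log(rebuiltLayers(depsFirst, before, after)); // 2 (COPY src, build)
console.log(rebuiltLayers(sourceFirst, before, after)); // 3 (COPY ., install, build)
```

With dependencies installed first, a one-line source edit never re-runs the install.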

Self-hosted runners vs cloud elasticity

GitHub-hosted runners are convenient; self-hosted runners reduce queue wait when you control hardware—at the cost of patching and isolation. Monorepos that burst parallelism may need larger pools or autoscaling groups; otherwise merges pile up at rush hour.
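A back-of-the-envelope way to size a runner pool is Little's law applied to jobs in service: the average number of busy runners equals the job arrival rate times the average job duration. This sketch applies it to hypothetical quiet and rush hours and divides by a utilization target, because a pool running near 100% busy builds long queues. The 70% target is an assumption, not a standard.

```typescript
// Little's law (L = λ × W) gives average busy runners; headroom comes from the utilization target.
function runnersNeeded(jobsPerHour: number, avgJobMinutes: number, targetUtilization = 0.7): number {
  const busyRunners = (jobsPerHour / 60) * avgJobMinutes;
  return Math.ceil(busyRunners / targetUtilization);
}

// Hypothetical traffic: 12-minute jobs, quiet hour vs rush hour.
console.log(runnersNeeded(30, 12)); // 9
console.log(runnersNeeded(180, 12)); // 52
```

A fixed pool sized for the quiet hour would leave most rush-hour jobs waiting, which is the case autoscaling groups are meant to absorb.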

Migrations: incremental beats big bang

Moving into a monorepo—or out—should be phased: start with shared libraries, add apps after pipelines stabilize. Dual-write periods hurt; communicate timelines. Task runners can help by letting teams opt packages into the graph gradually.

Developer onboarding and the “clone to first green” metric

New hires measure culture in hours-to-first-PR. Document prerequisites, provide devcontainers or Nix flakes if you can, and script bootstrap. A 30-minute README beats a three-hour tribal knowledge tour—especially across time zones.
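"Script bootstrap" can start as small as a preflight check that fails fast with an actionable message. This is a sketch, assuming the pinned toolchain has been read elsewhere (for example from `.nvmrc` and the `packageManager` field in `package.json`) and that the running Node version comes from `process.versions.node`. The `preflight` helper and its messages are hypothetical.

```typescript
// Hypothetical pinned toolchain, e.g. read from .nvmrc and package.json's "packageManager" field.
interface Toolchain {
  node: string; // "20" or "20.11.1"
  packageManager: string; // e.g. "pnpm@8.15.4"
}

// "20" accepts any 20.x.y; "20.11.1" requires that exact release. Aliases like "lts/*" are not handled.
function nodeVersionMatches(required: string, actual: string): boolean {
  const want = required.trim().replace(/^v/, "").split(".");
  const have = actual.trim().replace(/^v/, "").split(".");
  return want.every((part, i) => part === have[i]);
}

interface CheckResult {
  name: string;
  ok: boolean;
  fix: string; // actionable hint shown on failure
}

function preflight(required: Toolchain, actual: Toolchain): CheckResult[] {
  return [
    {
      name: `node ${required.node}`,
      ok: nodeVersionMatches(required.node, actual.node),
      fix: `found ${actual.node}; switch with your version manager (nvm, Volta, asdf)`,
    },
    {
      name: required.packageManager,
      ok: required.packageManager === actual.packageManager,
      fix: `found ${actual.packageManager}; enable the pinned version (for example via Corepack)`,
    },
  ];
}

// In a real script, the actual Node version would come from process.versions.node.
const results = preflight(
  { node: "20.11.1", packageManager: "pnpm@8.15.4" },
  { node: "20.9.0", packageManager: "pnpm@8.15.4" },
);
for (const r of results.filter((c) => !c.ok)) console.log(`FAIL ${r.name}: ${r.fix}`);
// FAIL node 20.11.1: found 20.9.0; switch with your version manager (nvm, Volta, asdf)
```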

Polyglot edges: mobile, native, and backend packages

JavaScript monorepos often host React Native or Expo apps that still call Xcode and Gradle. Those toolchains do not always follow the same cache semantics: CocoaPods installs and SDK downloads can dominate build time. Consider isolating mobile builds in separate pipelines that reuse ecosystem-specific caches (Xcode derived data, Gradle's remote build cache) instead of forcing everything through one generic graph that does not understand native invalidation.

Similarly, Go or Rust services living beside TS packages need distinct linters and compilers—unify orchestration at the “task” level, not by pretending one compiler fits all.

Incident response when CI becomes the incident

When queues back up, communicate transparently: an incident channel, a workaround (merge freeze, manual deploy), and a postmortem. Treat CI as production infrastructure; it gates revenue. A build-cop on-call rotation is unglamorous, but it prevents multi-day merge freezes.

Bottom line

Turborepo, Nx, Bazel-inspired flows, and friends are force multipliers for disciplined graphs. They are not fairy dust for organizational coupling. Invest in accurate dependencies, deterministic tasks, and tiered CI; measure p50 and p90 pipeline times; and remember that developers experience the product as time-to-green, not as your cache-hit-rate dashboard. Speed is a feature; own it explicitly.

Finally, celebrate wins: when you cut median CI time materially, tell the org why—teams adopt guardrails when they see payoff, not when infra mutters about graphs.