How One Ransomware Attack Changed How I Think About Backups

February 25, 2026

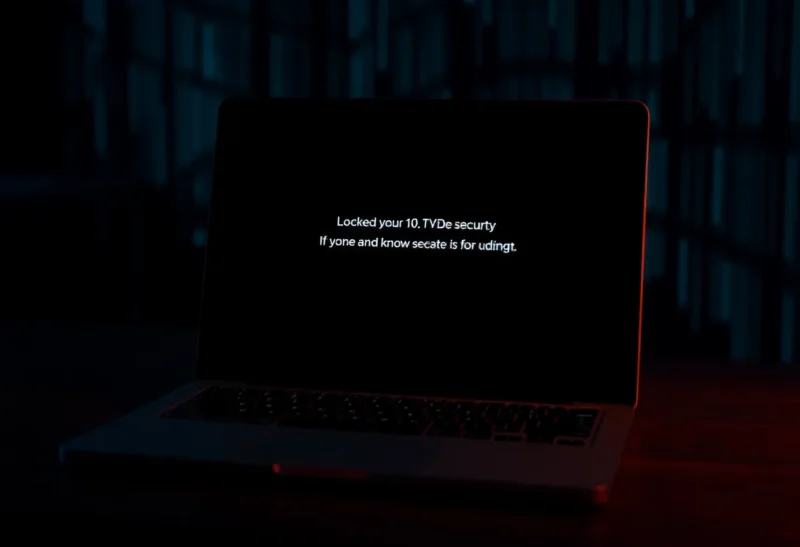

I thought I had backups. Then ransomware hit, and I learned the hard way that “having backups” and “having backups that survive an attack” are not the same thing. One incident changed how I think about what to back up, where to keep it, and how often to test.

What Went Wrong

The attack wasn’t sophisticated. A compromised credential, a few hours of encryption, and everything on the main file server—including the backup destination that was mounted and writable—was gone. The backup software had been writing to a share on the same network. When the ransomware ran, it encrypted that too. I had copies; they just weren’t offline or immutable. I’d optimized for convenience and restore speed, not for surviving a live attacker who could see the same network I could.

That’s the lesson I keep coming back to: backups that are always online and always writable are vulnerable to the same event that takes down your primary. Ransomware doesn’t care that a volume is “the backup.” If the backup is reachable from a compromised machine, it can be encrypted or deleted. The only backups that count are the ones the attacker can’t touch.

What I Changed

I moved to a model where at least one copy is offline or immutable. For me that means a set of drives that are only connected during the backup window, and a cloud backup that uses object lock or a similar “can’t be deleted for X days” feature so that even a compromised account can’t wipe the backup immediately. Multiple copies are still good (3-2-1, meaning three copies on two kinds of media with one offsite, or whatever rule you like), but the critical change is that at least one copy can’t be modified by the same attack that hits production.
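To make the “can’t be deleted for X days” idea concrete, here is a minimal sketch of how a retention lock could be requested on upload. It assumes Amazon S3 with Object Lock already enabled on the bucket and the third-party boto3 library; I haven’t named my actual provider here, so treat the provider and the 30-day default as assumptions. The function names `object_lock_params` and `upload_locked` are made up for illustration, and the sketch has not been run against a real bucket.

```python
from datetime import datetime, timedelta, timezone


def object_lock_params(retain_days, now=None):
    """Build the extra put_object arguments that request a retention lock.

    COMPLIANCE mode means the object version cannot be deleted or
    overwritten by any account until the retain-until date passes.
    """
    if retain_days <= 0:
        raise ValueError("retain_days must be positive")
    now = now or datetime.now(timezone.utc)
    return {
        "ObjectLockMode": "COMPLIANCE",
        "ObjectLockRetainUntilDate": now + timedelta(days=retain_days),
    }


def upload_locked(bucket, key, path, retain_days=30):
    """Upload one backup file with a retention lock (hypothetical helper).

    Assumes the bucket was created with Object Lock enabled; without
    that, S3 rejects the lock parameters.
    """
    import boto3  # third-party; imported here so the helper above has no dependencies

    s3 = boto3.client("s3")
    with open(path, "rb") as fh:
        s3.put_object(
            Bucket=bucket,
            Key=key,
            Body=fh,
            **object_lock_params(retain_days),
        )
```

The point of the design is that the retention date is set at write time, so credentials stolen later can add new objects but can’t shorten the lock on the ones already there. Other providers offer similar features under different names and with different parameters.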

I also test restores more often. I used to assume that because backups were running and reporting success, they would work when I needed them. Now I pick a random file or directory every few weeks and actually restore it to a different location. It’s boring work, but it’s the only way to know that the pipeline actually works end to end. The first time I did it after the incident, I found a permission issue that would have slowed a real recovery. Better to find that on a Tuesday afternoon than during an emergency.
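The spot check described above can be sketched in a few lines. This is a simplified illustration, not my actual tooling: it assumes the backup is a plain directory mirror of the source, and `shutil.copy2` stands in for whatever restore command your backup software really provides. The names `spot_check_restore`, `source_root`, `backup_root`, and `scratch_root` are hypothetical.

```python
import hashlib
import random
import shutil
from pathlib import Path


def sha256_of(path):
    """Hash a file in 1 MiB chunks so large files don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def spot_check_restore(source_root, backup_root, scratch_root, rng=random):
    """Restore one randomly chosen file to a scratch location and compare hashes.

    Returns (relative_path, matches), where matches is True when the
    restored copy is byte-identical to the live file.
    """
    source_root, backup_root = Path(source_root), Path(backup_root)
    candidates = [p for p in source_root.rglob("*") if p.is_file()]
    if not candidates:
        raise ValueError("no files to test under source_root")

    original = rng.choice(candidates)
    relative = original.relative_to(source_root)

    # Restore to a different location, never over the live file.
    restored = Path(scratch_root) / relative
    restored.parent.mkdir(parents=True, exist_ok=True)
    # Stand-in for the real restore step of your backup tool.
    shutil.copy2(backup_root / relative, restored)

    return relative, sha256_of(original) == sha256_of(restored)
```

A mismatch doesn’t always mean the backup is bad, since the live file may simply have changed after the last backup ran. A missing file or a permission error during the copy is the kind of problem this check is meant to surface early.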

The Mindset Shift

Before, I thought about backups as insurance against hardware failure or accidental deletion. Now I think about them as insurance against “something has full access to my systems and wants to destroy or hold my data hostage.” That changes the design. Air-gapped or offline copies, immutable storage, and regular restore tests aren’t overkill—they’re the minimum for taking backups seriously. One ransomware attack was enough to make that obvious. I don’t want to learn it twice.